CrewAI captured something real about how engineers want to think about agents. The mental model of a crew (researcher, writer, manager, reviewer) collaborating on a task is intuitive, communicable, and easy to demo. Founder João Moura shipped the first version in 2024, the GitHub repo crossed tens of thousands of stars within months, and a commercial platform followed (CrewAI GitHub, retrieved 2026).

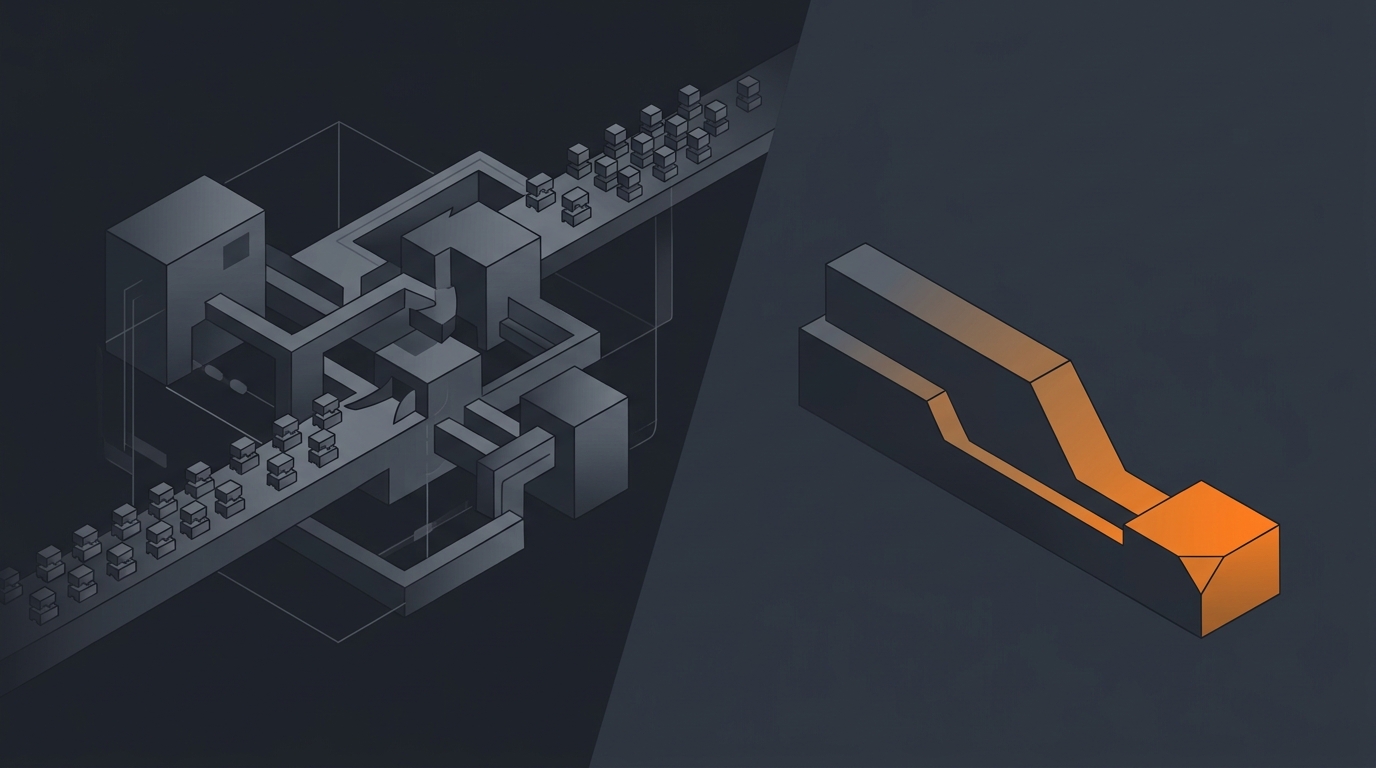

The framework is excellent. The default assumption that ops tasks should be done by a crew is the part I want to challenge. For most operational jobs, one well-designed agent ships faster, costs less, and fails less than a multi-agent crew. This piece walks through where the multi-agent thesis is genuine and where it is over-architected.

What CrewAI is in 2026

CrewAI is a Python framework that lets engineers define a crew of agents, each with a role, a goal, a backstory, and a tool set. A crew runs a task by passing context between agents in a sequential or hierarchical process. The metaphor maps cleanly to how a small team divides work, which is part of why CrewAI demos so well.

The crew metaphor

You write something like: a Researcher with web-search tools, a Writer with drafting capabilities, a Manager that coordinates them. You then assign the crew a task ("write a market analysis on the EV charging space"). The Manager delegates research, the Researcher returns findings, the Writer drafts the report.
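
The hand-off described above can be sketched in plain Python. This is a toy model, not the CrewAI API: `ToyAgent`, `run_sequential`, and the lambda "work" functions are hypothetical names invented for illustration, and a real agent would call a model and tools instead of formatting a string.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ToyAgent:
    """Hypothetical stand-in for a crew member; NOT the CrewAI Agent class."""
    role: str
    goal: str
    backstory: str
    work: Callable[[str], str]  # takes upstream context, returns this agent's output


def run_sequential(agents: List[ToyAgent], task: str) -> List[str]:
    """Run agents in order, feeding each one the previous agent's output."""
    context = task
    transcript = []
    for agent in agents:
        context = agent.work(context)
        transcript.append(f"{agent.role}: {context}")
    return transcript


researcher = ToyAgent(
    role="Researcher",
    goal="Collect findings",
    backstory="Analyst who covers energy markets",
    work=lambda ctx: f"findings for [{ctx}]",
)
writer = ToyAgent(
    role="Writer",
    goal="Draft the report",
    backstory="Technical writer",
    work=lambda ctx: f"draft based on {ctx}",
)

transcript = run_sequential([researcher, writer], "EV charging market analysis")
for line in transcript:
    print(line)
```

The point of the sketch is the shape: each agent only sees what the previous one handed over, which is why the quality of every hand-off matters.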

The commercial product

The CrewAI team has built a commercial platform on top of the framework, with deployment, monitoring, and enterprise features. The platform makes the framework easier to operate, but the underlying mental model stays multi-agent.

The multi-agent thesis (and where it breaks)

The multi-agent thesis is appealing for three reasons: it mirrors how human teams work, it lets each agent specialise, and it produces clearer demos than a single-agent monolith. Applied to operational work, it breaks down for three countervailing reasons: token cost, error cascades, and debugging difficulty.

Tokens, not just compute

Every message between agents is tokens in and tokens out. A crew of five agents talking through a task generates substantial chatter. Anthropic's December 2024 engineering note on agents recommends finding the simplest solution possible and increasing complexity only when needed (Anthropic, 2024). The cost is real.
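
A rough way to see how chatter adds up is a back-of-the-envelope token count. The model below is deliberately simplistic and every number in it is an illustrative assumption, not a measurement: each hand-off reads the accumulated context and writes one reply, and that reply is appended to the context the next agent reads. The function name `handoff_tokens` is hypothetical.

```python
def handoff_tokens(handoffs: int, task_tokens: int, reply_tokens: int) -> int:
    """Estimate total tokens (input + output) for a chain of hand-offs.

    Assumption: every step re-reads the whole context so far, then adds its reply to it.
    """
    total = 0
    context = task_tokens
    for _ in range(handoffs):
        total += context + reply_tokens  # read the context in, write a reply out
        context += reply_tokens          # the reply becomes context for the next step
    return total


# Illustrative numbers only: a 2,000-token task and 500-token replies.
single_pass = handoff_tokens(handoffs=1, task_tokens=2000, reply_tokens=500)
five_handoffs = handoff_tokens(handoffs=5, task_tokens=2000, reply_tokens=500)

print(single_pass)    # 2500
print(five_handoffs)  # 17500
```

Under these assumptions, five chained hand-offs cost seven times as much as one pass, because the growing context is paid for again at every step. Real workloads will differ; the growth pattern is what matters.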

Error cascades

When one agent in a chain makes a mistake, the downstream agents reason on top of bad output. The Writer drafts a report from the Researcher's hallucinated findings. The Manager approves. The Reviewer signs off. The user reads a confidently-wrong report.
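
The compounding can be made concrete with a small calculation. This sketch assumes each agent is independently correct with the same probability and that no downstream agent catches an upstream error; both are simplifications, and the 95% figure is an illustrative assumption rather than a measured accuracy.

```python
def chain_success(per_agent_accuracy: float, agents: int) -> float:
    """Probability that every agent in a chain is correct, assuming independent errors."""
    return per_agent_accuracy ** agents


# Researcher -> Writer -> Manager -> Reviewer, each assumed 95% reliable.
four_agent_chain = chain_success(0.95, 4)
print(f"{four_agent_chain:.3f}")  # 0.815
```

Four individually reliable agents produce a chain that is right only about four times in five under these assumptions. A reviewer that genuinely catches errors would improve on this; one that rubber-stamps does not.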

Debugging hell

Tracing why a crew did the wrong thing means reading a five-participant conversation. The failure mode is distributed across agents. A single-agent failure is local and inspectable. For the failure-mode framing see AI agent failure modes.

What Gravity does differently

Gravity defaults to one agent. The agent has a clear outcome and a tool set sized to that outcome. When it needs to consult another agent (rare), it can. Most of the time, it does not.

The bet behind this default: as frontier models improve, the marginal capability gain from multi-agent orchestration shrinks, and the cost penalty stays. One smart agent doing the whole job is the right shape for most ops work. For the framing see single agent vs multi-agent and multi-agent systems explained.

When you actually need multiple agents

Three places multi-agent is genuinely the right pattern.

Parallel research

"Cover ten companies in this market in parallel" benefits from parallel researchers. Each agent covers a slice, results merge at the end. CrewAI is good at this.
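
The fan-out and merge can be sketched with the standard library alone. Here `research` is a hypothetical stand-in for one researcher agent (a real one would call a model and tools), and the company names are placeholders.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def research(company: str) -> Dict[str, str]:
    """Hypothetical stand-in for one researcher agent covering one company."""
    return {"company": company, "summary": f"notes on {company}"}


def parallel_research(companies: List[str], max_workers: int = 5) -> List[Dict[str, str]]:
    """Fan out one research job per company, then merge the results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in the order of the inputs,
        # so the merged report is deterministic.
        return list(pool.map(research, companies))


companies = [f"Company {i}" for i in range(1, 11)]
report = parallel_research(companies)
print(len(report))  # 10
```

This pattern works because the slices are independent: no researcher needs another researcher's output, so there is no hand-off chain for errors to travel along.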

Creative pipelines

Writer, editor, fact-checker, layout. Different roles benefit from different prompts and different model choices. The hand-off model maps to the workflow.

Red-team / proposer-critic patterns

One agent proposes a solution, another critiques it. The critique improves quality on tasks where one-shot reasoning misses edge cases. The pattern is well-supported by research.
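
A minimal sketch of the propose-and-critique loop, assuming the critic returns `None` when it has no objection. The function names and the toy proposer and critic are hypothetical; in practice both would be model calls with different prompts.

```python
from typing import Callable, Optional, Tuple


def propose_and_critique(
    propose: Callable[[Optional[str]], str],
    critique: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> Tuple[str, int]:
    """Alternate proposer and critic until the critic is satisfied or rounds run out."""
    feedback: Optional[str] = None
    draft = ""
    rounds = 0
    for rounds in range(1, max_rounds + 1):
        draft = propose(feedback)
        feedback = critique(draft)
        if feedback is None:  # critic has no objection
            break
    return draft, rounds


def toy_propose(feedback: Optional[str]) -> str:
    """Toy proposer: appends whatever the critic asked for."""
    if feedback is None:
        return "split the total evenly"
    return f"split the total evenly; {feedback}"


def toy_critique(draft: str) -> Optional[str]:
    """Toy critic: objects until the draft mentions rounding."""
    if "rounding" not in draft:
        return "handle rounding remainders"
    return None


result, rounds = propose_and_critique(toy_propose, toy_critique)
print(result)
print(rounds)
```

The `max_rounds` cap is the important design choice: without it, a proposer and critic that never agree would keep spending tokens indefinitely.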

Capability comparison

| Dimension | CrewAI | Gravity |
|---|---|---|
| Surface | Python framework + platform | Finished product |
| Default agent count | Multiple (crew) | One per outcome |
| Cost predictability | Scales with crew chatter | Bounded per task |
| Failure recovery | Per-agent, cascades possible | Single recovery loop |
| Best use case | Research, creative, exploration | Ops, recurring, deployed work |
| Buyer | Engineering team | Founder / ops lead |

The hidden cost of multi-agent orchestration

Three concrete cost lines that most CrewAI demos do not show.

Inter-agent token consumption

The agents talk to each other, and each message is tokens. A five-agent crew running for ten minutes can consume more tokens than a single agent working on the same task for fifteen, because much of the crew's budget goes to agents reading and answering one another. The back-and-forth makes the run feel longer, and the bill is higher. See AI agent cost models.

Cascade failure modes

One agent hallucinates, downstream agents accept and build on the hallucination. By the time the output reaches the user, the original error is invisible behind three confident citations. Single-agent failures are easier to catch because there is one place the truth got bent.

Debugging cost

Engineers debugging a multi-agent failure read five participants' transcripts. The cognitive load is high. The fix is often unclear (which agent's prompt was wrong? which routing decision? whose tool call?). Operational platforms invest in single-agent observability because the failure surface is simpler.

Where CrewAI is the right choice

Three categories.

Research crews

Parallel exploration of a question. Multiple agents, each covering an angle, merging at the end. The pattern is appropriate; the cost is justified.

Custom in-house orchestration

You have an engineering team that wants to build a bespoke multi-agent system. CrewAI gives you the primitives without dictating the product shape.

Exploration and prototyping

You want to test what multi-agent buys you for your specific workload. CrewAI is a clean framework for that experimentation.

Where Gravity is the right choice

Three contrasting categories.

Production ops

Deployed daily work where reliability, cost, and audit matter more than elegance. One agent, one tool set, one recovery loop. See AI agent orchestration.
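
One way to picture "one recovery loop" is a bounded retry wrapper that keeps an audit trail. This is a generic sketch under stated assumptions, not Gravity's implementation: `run_with_recovery`, `flaky_invoice_chase`, and the invoice identifier are hypothetical, and the simulated timeout stands in for any transient tool failure.

```python
from typing import Any, Callable, Dict, List


def run_with_recovery(
    task: str,
    attempt: Callable[[str, List[str]], Any],
    max_attempts: int = 3,
) -> Dict[str, Any]:
    """Run one agent on one task, retrying on failure and recording every error."""
    errors: List[str] = []
    for n in range(1, max_attempts + 1):
        try:
            result = attempt(task, errors)  # earlier errors are passed in as context
            return {"ok": True, "result": result, "attempts": n, "errors": errors}
        except Exception as exc:
            errors.append(f"attempt {n}: {exc}")
    return {"ok": False, "result": None, "attempts": max_attempts, "errors": errors}


def flaky_invoice_chase(task: str, errors: List[str]) -> str:
    """Toy agent step: fails once with a simulated timeout, then succeeds."""
    if not errors:
        raise TimeoutError("mail API timed out")
    return f"sent reminder for {task}"


outcome = run_with_recovery("invoice 1042", flaky_invoice_chase)
print(outcome["ok"], outcome["attempts"])
print(outcome["errors"])
```

Because there is a single loop, the `errors` list is the whole failure history for the task, which is what makes the audit question ("what went wrong, and when?") answerable from one place.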

Single-task discipline

The temptation to add more agents is real, and it costs you. Gravity's default is restraint: one agent per outcome, expand only when the evidence supports it.

Business users

Founders, ops leads, salespeople. They do not want to debug a crew transcript. They want the work done. See describe outcome, not workflow and three startups, three shutdowns.

Frequently asked questions

What is CrewAI?

CrewAI is an open-source Python framework for orchestrating role-playing autonomous AI agents, founded by João Moura in 2024. The mental model is a 'crew' of agents (researcher, writer, manager, reviewer) that collaborate on a task. The framework is MIT-licensed and has grown a significant community; the team launched a commercial CrewAI Platform in 2024.

When do you actually need multiple agents?

Multi-agent setups are useful for research-heavy tasks where parallel exploration helps (multiple researchers covering different angles), creative pipelines (writer, editor, fact-checker), and red-team patterns (one agent proposes, another critiques). Most ops tasks do not need multiple agents; the same job can be done by one well-designed agent with more reliability and less cost.

Is CrewAI a competitor to Gravity?

CrewAI is a framework for engineers; Gravity is a product. Different sides of the build-vs-buy line, similar to the LangChain comparison. CrewAI also has a multi-agent design philosophy while Gravity defaults to single well-designed agents for ops work. They appeal to different buyers solving different problems.

What is the hidden cost of multi-agent orchestration?

There are three. First, token cost: every inter-agent message uses tokens, and chatter scales fast. Second, error cascades: when one agent in a chain misbehaves, the downstream agents reason on top of bad output. Third, debugging: tracing why a multi-agent crew did the wrong thing involves following a conversation across five participants, not one. Anthropic's December 2024 'Building Effective Agents' explicitly argues for simpler patterns for these reasons.

When does Gravity beat CrewAI for ops?

For deployed recurring tasks where reliability, cost predictability, and integration depth matter more than the elegance of the multi-agent abstraction. Lead follow-up, invoice chasing, KPI roll-ups, ticket triage. The single-agent pattern wins on cost and clarity; the buyer wants outcomes, not a crew chart.

Three takeaways before you close this tab

- CrewAI is a framework with a multi-agent default. Gravity is a product with a single-agent default.

- Multi-agent has hidden costs: tokens, cascades, debugging. Most ops jobs do not justify them.

- Use the right tool for the shape of work. Crews for research; single agents for ops.

Sources

- CrewAI, "GitHub repository", retrieved 2026-05-14, github.com/joaomdmoura/crewAI

- CrewAI, "Product page", retrieved 2026-05-14, crewai.com

- Anthropic, "Building Effective Agents", December 2024, anthropic.com/engineering/building-effective-agents

- João Moura, founder writings on X, retrieved 2026-05-14, x.com/joaomdmoura

- AgentBench, "Evaluating LLMs as Agents", 2023, arxiv.org/abs/2308.03688

- Aryan Agarwal, "Single agent vs multi-agent", May 2026