Misrouted tickets are the silent SLA killer. A queue of 800 tickets where half land on the wrong team is a queue of 1200, because every wrong route costs a reassignment, a re-read, and a re-clock on the SLA timer. The team that owns the ticket on minute one is the team that resolves it on hour three. Anything else burns the response window and burns the customer's patience while it does.

This is the boring problem an AI agent is actually good at. Not "answer the customer", not "be a chatbot", but the unglamorous queue work of reading a ticket, deciding which team should own it, setting the right priority based on plan tier and SLA risk, tagging cleanly, and getting out of the way. The job is queue routing, not customer conversation. Done well it returns a clean queue to a support manager every morning. Done badly it adds a layer of confusion on top of an existing mess.

What this agent does

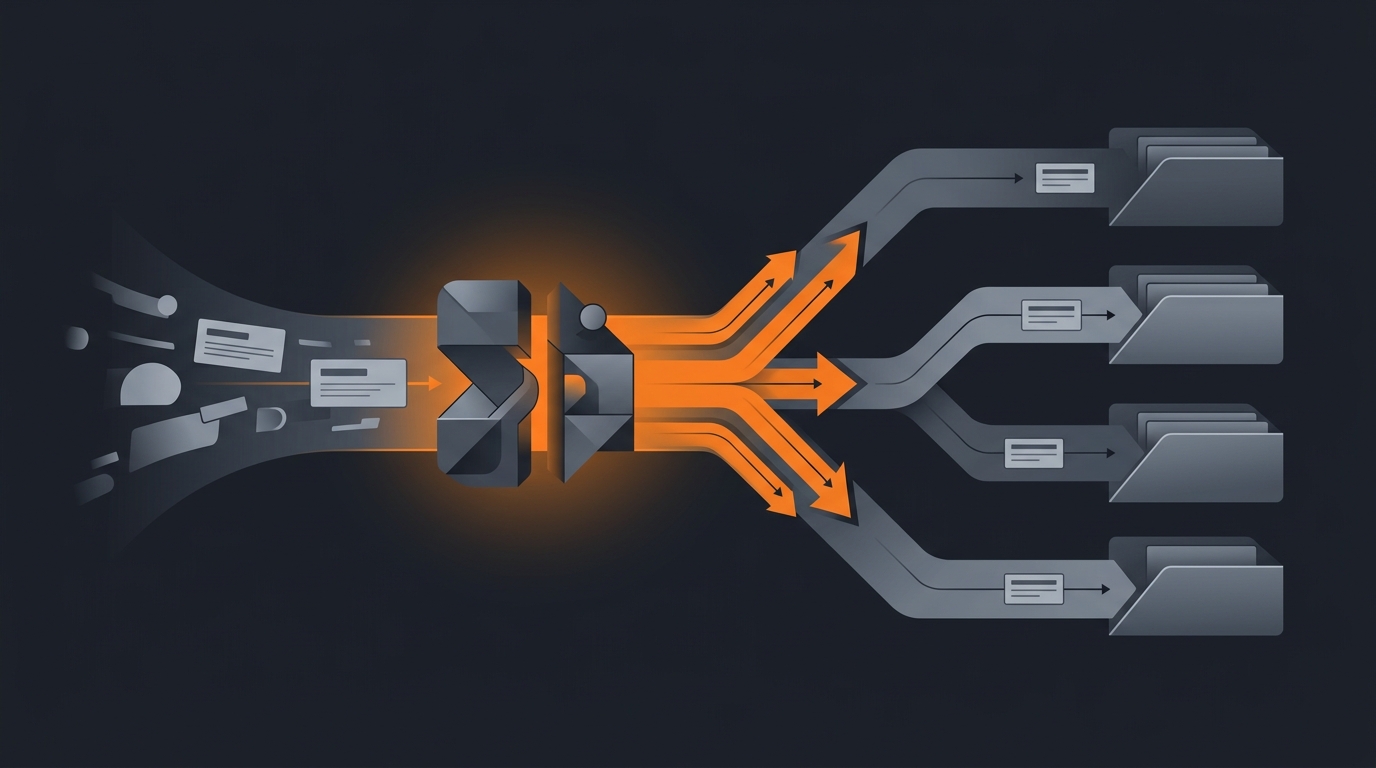

The agent subscribes to the Zendesk ticket.created webhook. For each new ticket it reads the subject and body, pulls the requester record, pulls the organization and plan tier, scans recent ticket history for the same requester, classifies intent across five buckets, scores sentiment, computes a priority score, and writes back four fields on the ticket: group_id, assignee_id (optional), priority, and tags. It also drops a private internal comment that explains the decision, including the confidence score and the two signals that drove it.
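The write-back can be sketched as a single update body. This is a minimal sketch, not the production agent: `build_triage_update` and its parameters are hypothetical names, and the payload shape (a `ticket` object carrying the fields plus a `comment` with `public` set to false) follows the ticket update format as we understand it from the Tickets API reference listed under Sources. Verify against that reference before shipping.

```python
from typing import Optional

VALID_PRIORITIES = ("low", "normal", "high", "urgent")


def build_triage_update(
    group_id: int,
    priority: str,
    tags: list[str],
    existing_tags: list[str],
    note: str,
    assignee_id: Optional[int] = None,
) -> dict:
    """Build the body for one ticket update: routing fields plus a private note.

    Hypothetical helper. It only builds the dict; no network call is made.
    """
    if priority not in VALID_PRIORITIES:
        raise ValueError(f"unknown priority: {priority}")

    ticket: dict = {
        "group_id": group_id,
        "priority": priority,
        # Merge with the tags already on the ticket, on the assumption
        # that writing "tags" replaces the whole set.
        "tags": sorted(set(existing_tags) | set(tags)),
        # public=False keeps the explanation as an internal note.
        "comment": {"body": note, "public": False},
    }
    # assignee_id is optional: leave it out to let the group assign.
    if assignee_id is not None:
        ticket["assignee_id"] = assignee_id
    return {"ticket": ticket}
```

Putting all four fields and the internal comment in one update keeps the decision and its explanation together on the ticket.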

It is not a chatbot. It does not reply to the customer. It does not mark anything solved. It does not invent macros. For the broader read-then-route pattern across agent types, see what an AI agent can actually do.

The optional second mode: after the routing fields are set, the agent can draft a first response in a private "Notes" comment field for the assigned human agent to review, edit, and send. The draft is grounded in the help center articles for that intent class, not invented. Start with this mode off. Turn it on only after the routing has stabilized, and only on intent classes where the help center coverage is genuinely good.

Sources of truth

One source per field. The agent reads but does not maintain its own copies.

- Zendesk ticket. Subject, body, requester, channel, brand, ticket_form. Source of truth for the customer intent.

- Customer organization record. Industry, contract value, account owner, language preference. Source of truth for the relationship.

- Plan tier. Pulled from your billing system or the org custom field. Drives SLA and priority weighting.

- Prior ticket history. Last 90 days for the same requester. Repeat tickets get a different priority weight.

- Help center articles. If draft-response mode is on. Grounded retrieval, no invented answers. See how to train an agent on company docs.

What the agent does not read: marketing systems, product analytics, the customer's social media. Stay inside the support domain. The agent that reads everything ends up doing nothing well.

Classification and priority scoring

Intent classification is a five-bucket call: billing, bug, account access, refund, feature. A small percentage falls into "other" and gets routed to a catch-all queue for human triage. Five buckets are not a limitation, they are a discipline. More buckets make the model less confident on every call. Per the 2025 Zendesk CX Trends Report, AI-driven categorization is now standard practice across enterprise CX teams; the lesson from those deployments is that fewer, sharper categories beat many fuzzy ones.
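One way to hold the five-bucket discipline in code is to normalize whatever the model returns and push anything unrecognized into the catch-all. A minimal sketch: the bucket keys, `normalize_intent`, and the idea that the model returns a label plus a confidence are assumptions for illustration.

```python
# Hypothetical bucket keys for the five intents named above.
INTENTS = ("billing", "bug", "account_access", "refund", "feature")
CATCH_ALL = "other"


def normalize_intent(raw_label: str, confidence: float) -> tuple[str, float]:
    """Map a model label onto one of the five buckets, or the catch-all.

    Labels outside the five buckets are never trusted: they become
    "other" with confidence 0.0 so a human triages them.
    """
    label = raw_label.strip().lower().replace(" ", "_").replace("-", "_")
    if label not in INTENTS:
        return CATCH_ALL, 0.0
    # Clamp the confidence into [0, 1] in case the model misreports it.
    return label, max(0.0, min(1.0, confidence))
```

The point of the clamp and the fallback is that no new bucket can appear in the queue just because the model invented one.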

Priority is a weighted score, not a category. The agent computes:

- SLA breach risk. Time-to-first-response remaining versus the SLA target for this plan tier. A ticket with 30 minutes left on a four-hour SLA scores higher than one with three hours left.

- Sentiment. Angry words, urgency markers, escalation language ("cancelling", "lawyer", "still not working"). Mild to severe scale.

- Customer tier. Plan tier from the org record. Enterprise customers get a baseline lift; free-tier customers do not get dropped, they just lose the lift.

- Topic weight. Account access (cannot log in, locked out) and security topics get a baseline lift regardless of tier.

- History modifier. Third repeat ticket on the same issue gets a lift. The customer already tried the obvious thing.

The weighted score maps to Zendesk's four priorities: low, normal, high, urgent. Calibrate the thresholds against three weeks of historical tickets that have been hand-prioritized by a senior agent. Do not invent the thresholds from intuition.
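The five signals above can be sketched as a weighted sum. Everything numeric here is a placeholder: the weights, the thresholds, and the `Signals` fields are hypothetical and exist only to show the shape of the calculation. The real values come out of the calibration against hand-prioritized tickets.

```python
from dataclasses import dataclass

# Placeholder weights and thresholds. Calibrate against real tickets.
WEIGHTS = {"sla": 0.35, "sentiment": 0.25, "tier": 0.15, "topic": 0.15, "history": 0.10}
THRESHOLDS = [(0.75, "urgent"), (0.50, "high"), (0.25, "normal")]


@dataclass
class Signals:
    minutes_left: float          # time left before first-response SLA breach
    sla_minutes: float           # SLA target for this plan tier
    sentiment: float             # 0.0 (mild) to 1.0 (severe)
    is_enterprise: bool
    is_access_or_security: bool
    repeat_count: int            # tickets on this issue, including this one


def sla_risk(minutes_left: float, sla_minutes: float) -> float:
    """Fraction of the SLA window already used, clamped to [0, 1]."""
    if sla_minutes <= 0:
        return 1.0
    return max(0.0, min(1.0, 1.0 - minutes_left / sla_minutes))


def priority_score(s: Signals) -> float:
    parts = {
        "sla": sla_risk(s.minutes_left, s.sla_minutes),
        "sentiment": max(0.0, min(1.0, s.sentiment)),
        "tier": 1.0 if s.is_enterprise else 0.0,       # lift, never a penalty
        "topic": 1.0 if s.is_access_or_security else 0.0,
        "history": 1.0 if s.repeat_count >= 3 else 0.0,
    }
    return sum(WEIGHTS[name] * value for name, value in parts.items())


def to_priority(score: float) -> str:
    """Map the score onto Zendesk's four priorities."""
    for floor, name in THRESHOLDS:
        if score >= floor:
            return name
    return "low"
```

With these placeholder numbers, an angry enterprise ticket with 30 minutes left on a four-hour SLA lands on high, and a calm free-tier ticket with most of its window left lands on low. Free tier loses the lift but is never pushed below the baseline.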

Routing rules

Routing is the boring deterministic step. Intent maps to a Zendesk group; priority maps to the assignee tier within that group; the org's account owner gets a CC when the customer is enterprise tier.

- Billing. Billing-Support group. Urgent or high goes to senior billing; low or normal goes to round-robin.

- Bug. Technical-Support group. Add the `needs-engineering` tag if the body mentions a stack trace, error code, or "500".

- Account access. Trust-and-Safety group. Always at least high priority. Lockouts above two hours go urgent.

- Refund. Billing-Support group with a `refund-request` tag. Goes through the refund approval flow, not direct to assignee. See how to add a human approval step.

- Feature. Product-Feedback group. Tagged but rarely high priority. The product team owns the response cadence.
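The routing list above is small enough to live in a lookup table. A minimal sketch, assuming hypothetical intent keys and a hypothetical `Triage-Catch-All` group for anything outside the five buckets. Group names are taken from the list, and real code would map them to Zendesk group IDs.

```python
import re

# Intent key -> group name, as listed above. Keys are illustrative.
ROUTES = {
    "billing": "Billing-Support",
    "bug": "Technical-Support",
    "account_access": "Trust-and-Safety",
    "refund": "Billing-Support",
    "feature": "Product-Feedback",
}
PRIORITY_ORDER = ["low", "normal", "high", "urgent"]

# Body hints that a bug needs engineering: stack trace, error code, or "500".
ENGINEERING_HINTS = re.compile(r"stack trace|error code|\b500\b", re.IGNORECASE)


def route(intent: str, priority: str, body: str, lockout_hours: float = 0.0) -> dict:
    """Deterministic routing step: group, adjusted priority, routing tags."""
    group = ROUTES.get(intent, "Triage-Catch-All")  # hypothetical catch-all
    tags: list[str] = []

    if intent == "bug" and ENGINEERING_HINTS.search(body):
        tags.append("needs-engineering")
    if intent == "refund":
        tags.append("refund-request")
    if intent == "account_access":
        if lockout_hours > 2:
            priority = "urgent"
        elif PRIORITY_ORDER.index(priority) < PRIORITY_ORDER.index("high"):
            priority = "high"  # access issues never sit below high

    return {"group": group, "priority": priority, "tags": tags}
```

Keeping this step free of model calls means every routing outcome can be reproduced from the intent, the priority, and the ticket body.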

Escalation triggers are explicit. If the body contains "cancelling my account", "lawyer", "press", "GDPR data request", or "security incident", the agent sets urgent and CCs the head of support regardless of intent. These are not edge cases, they are policy. The agent enforces the policy that already exists.
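Because the trigger phrases are policy, they can be matched literally rather than left to the model. A minimal sketch: the phrase list is the one above, while `escalation_hits`, `apply_escalation`, and the `cc_head_of_support` key are hypothetical names for illustration.

```python
import re

ESCALATION_PHRASES = (
    "cancelling my account",
    "lawyer",
    "press",
    "gdpr data request",
    "security incident",
)

# Word boundaries stop "press" from firing on "compress" or "express".
_PATTERN = re.compile(
    "|".join(r"\b" + re.escape(p) + r"\b" for p in ESCALATION_PHRASES),
    re.IGNORECASE,
)


def escalation_hits(body: str) -> list[str]:
    """Return the distinct trigger phrases found in the ticket body."""
    return sorted({m.group(0).lower() for m in _PATTERN.finditer(body)})


def apply_escalation(decision: dict, body: str) -> dict:
    """Force urgent and flag the CC when any trigger phrase is present."""
    hits = escalation_hits(body)
    if not hits:
        return decision
    return {
        **decision,
        "priority": "urgent",
        "cc_head_of_support": True,
        "escalation_hits": hits,  # goes into the internal audit note
    }
```

Literal matching is blunt: "press" will also fire on "press the button". That is a reasonable trade for a policy rule, since a false urgent costs one human glance, but it is worth watching in the weekly review.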

Tagging strategy

Tags are how the support manager runs the weekly report. Three tag categories.

- Intent tag. One of the five buckets, applied always. Drives the weekly volume report.

- Topic tags. Specific topic within an intent (sso, webhook, double-charge, stuck-export). Drives the recurring-issue report.

- Routing-decision tags. `agent-triaged` on every ticket; `agent-low-confidence` when classification confidence is below 0.7; `agent-rerouted` if a human moves the ticket later.

The routing-decision tags are the audit layer. The weekly review reads tickets tagged agent-rerouted, looks at where they were sent versus where they ended up, and feeds the pattern back into the rules. For more on the observability side, see how to monitor agent activity.
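The three tag categories can be assembled in one place. A minimal sketch with hypothetical function names; the tag strings and the 0.7 cutoff are the ones described above.

```python
LOW_CONFIDENCE_BELOW = 0.7


def decision_tags(intent: str, topics: list[str], confidence: float) -> list[str]:
    """Intent tag, topic tags, then the routing-decision tags."""
    tags = [intent, *topics, "agent-triaged"]
    if confidence < LOW_CONFIDENCE_BELOW:
        tags.append("agent-low-confidence")
    # Drop duplicates while keeping the original order.
    return list(dict.fromkeys(tags))


def with_reroute_tag(tags: list[str], triaged_group_id: int, current_group_id: int) -> list[str]:
    """Add agent-rerouted once a human has moved the ticket to another group."""
    if current_group_id != triaged_group_id and "agent-rerouted" not in tags:
        return [*tags, "agent-rerouted"]
    return list(tags)
```

How the agent learns that a human moved the ticket (a later webhook, a scheduled scan) is left open here; the sketch only shows the tagging rule once both group IDs are known.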

Guardrails

Triage agents fail when their scope creeps. Five hard rules.

- Never close tickets. No `status=solved` writes, ever. The agent has no mandate to decide a customer's problem is finished.

- Never refund. Detecting a refund intent is fine; processing one is not. Refunds go through a separate approval flow.

- Never reassign macros. Macros are configured by the support manager. The agent reads them; it does not edit them.

- Never send public comments. The agent writes private internal comments only. If draft-response mode is on, drafts land as internal notes flagged for human send.

- Always leave an audit trail. Every ticket gets an internal comment explaining intent, priority signals, confidence score. No silent decisions.

For the broader scope-limiting pattern, see how to limit agent actions. For why guardrails are not optional, AI agent safety and guardrails covers the failure modes that show up at scale.
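The write-side rules can be enforced as an allowlist check that runs before any update leaves the agent. A minimal sketch: `check_update` and `GuardrailViolation` are hypothetical names, and the allowlist here is the four routing fields plus the comment. Extend it only deliberately.

```python
# Fields the triage agent may write. Anything else, including "status",
# is rejected before the request is sent.
ALLOWED_FIELDS = {"group_id", "assignee_id", "priority", "tags", "comment"}


class GuardrailViolation(Exception):
    """Raised when an outgoing update is outside the triage agent's scope."""


def check_update(payload: dict) -> dict:
    """Validate an outgoing ticket update against the hard rules."""
    ticket = payload.get("ticket", {})

    extra = set(ticket) - ALLOWED_FIELDS
    if extra:
        raise GuardrailViolation(f"fields outside triage scope: {sorted(extra)}")

    # Always leave an audit trail: every write carries a non-empty note.
    comment = ticket.get("comment")
    if not comment or not str(comment.get("body", "")).strip():
        raise GuardrailViolation("missing internal audit comment")

    # Never send public comments: public must be explicitly False,
    # because a missing flag may default to a public reply.
    if comment.get("public", True) is not False:
        raise GuardrailViolation("comment must be private")

    return payload
```

An allowlist fails closed. A new field added to the agent by accident is blocked until someone adds it here on purpose, which is the opposite of how a denylist behaves.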

Common mistakes

- Twenty intent classes instead of five. Model confidence collapses, rerouting rate spikes. Fewer, sharper buckets.

- Priority as a category, not a score. A flat "billing equals high" rule misses the angry enterprise customer on day two of a lockout.

- Auto-send drafts on day one. The grounded response is only as good as the help center. Audit the help center first.

- No reroute metric. If you cannot measure the reroute rate, you cannot improve the agent. Tag every triage decision.

- Treating triage like Slack triage. Different problem. Slack DMs need a different agent. See AI agent for Slack triage.

- Forgetting language detection. A French ticket routed to an English-only group is a guaranteed reroute. Detect language before routing.
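The reroute metric from the list above falls straight out of the routing-decision tags. A minimal sketch, assuming tickets arrive as dicts with a `tags` list and hypothetical `triaged_group` and `final_group` keys recorded by your own export.

```python
from collections import Counter


def reroute_rate(tickets: list[dict]) -> float:
    """Share of agent-triaged tickets that a human later moved."""
    triaged = [t for t in tickets if "agent-triaged" in t.get("tags", [])]
    if not triaged:
        return 0.0
    rerouted = sum(1 for t in triaged if "agent-rerouted" in t["tags"])
    return rerouted / len(triaged)


def reroute_patterns(tickets: list[dict]) -> Counter:
    """Count (triaged_group, final_group) pairs for rerouted tickets."""
    return Counter(
        (t["triaged_group"], t["final_group"])
        for t in tickets
        if "agent-rerouted" in t.get("tags", [])
    )
```

The rate tells you whether the agent is improving; the pair counts tell you which rule to fix first.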

Frequently asked questions

What does an AI agent for Zendesk ticket triage actually do?

It reads each new ticket through the Zendesk Support API, classifies intent (billing, bug, account access, refund, feature), pulls customer plan tier and prior history, computes a priority score from SLA breach risk and sentiment, then applies the correct group, assignee, tags, and priority fields. It does not reply to the customer or resolve the ticket. The agent is a queue operator, not a chatbot.

How fast does the agent triage a ticket?

Under two minutes for the common case. The Zendesk webhook fires on ticket.created, the agent fetches the customer org and plan record, classifies the intent, scores priority, and writes back the group, assignee, tags, and priority via the Tickets API. The slowest step is the language model pass for intent and sentiment, which adds 800 to 1500 milliseconds. The rest is API latency and bookkeeping.

Will the agent ever close tickets or send refunds?

No. Hard rule. The triage agent has scope to set fields (group, assignee, priority, tags, ticket_form_id) and to add internal comments. It cannot mark a ticket solved, cannot send public replies, cannot trigger refunds, and cannot reassign macros. Closing tickets requires a human or a separate agent with its own approval flow. Keep the blast radius small.

How is this different from Zendesk's built-in triggers and automations?

Triggers fire on keyword rules and field values. They cannot read the actual intent of a ticket that says "my card was charged twice and now I cannot log in" (that is billing plus account access plus angry). The agent reads natural-language context, customer history, and sentiment, then makes a routing decision a trigger cannot. Use triggers for deterministic rules; use the agent for everything ambiguous.

What happens when the agent misclassifies a ticket?

It will. Plan for a 5 to 12 percent reroute rate in week one. The agent writes an internal note on every ticket explaining the classification, priority, and routing decision, so a human agent can fix the routing in one click and the audit trail is intact. Each Friday, sample the reroutes, look for patterns, update the rules or training. Triage agents improve with feedback, not with prompts.

Three takeaways before you close this tab

- Triage is queue routing. Not a chatbot, not a resolver, not a refund machine.

- Priority is a score, not a label. SLA risk, sentiment, tier, topic, history.

- Every decision is auditable. Internal note, tag, confidence score. Always.

Sources

- Zendesk Developer Docs, "Tickets API reference", retrieved 2026-05-12, developer.zendesk.com/api-reference/ticketing/tickets/tickets

- Zendesk Developer Docs, "Webhooks for ticket events", retrieved 2026-05-12, developer.zendesk.com/documentation/event-connectors/webhooks

- Zendesk, "CX Trends Report 2025", retrieved 2026-05-12, zendesk.com/customer-experience-trends

- Gartner, "Market Guide for Customer Service and Support Technologies", 2024, summary retrieved 2026-05-12, gartner.com/en/customer-service-support

- Aryan Agarwal, "Gravity Zendesk triage agent spec", internal v1, May 2026