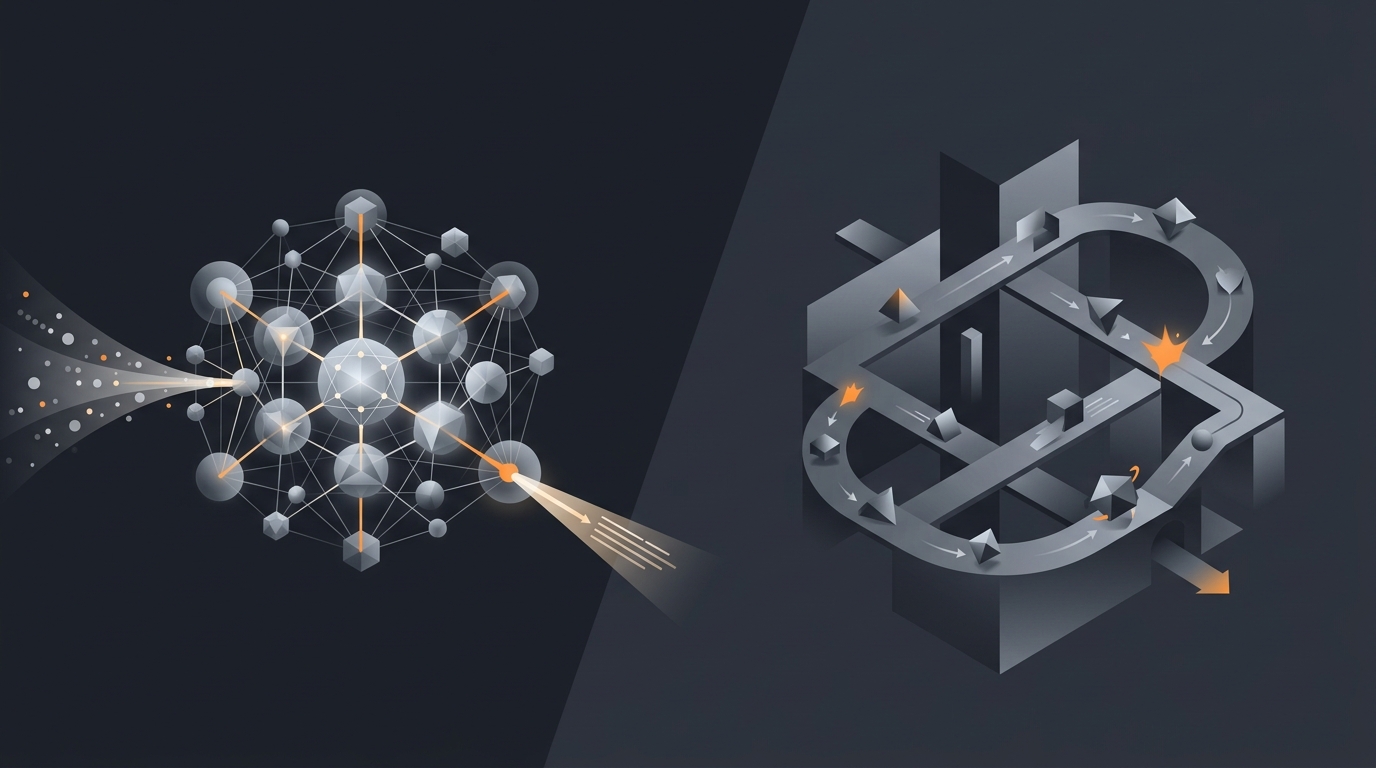

"Gravity vs Claude" is one of the most common search queries I see, and one of the most miscategorised. It is closer to "Toyota vs Bosch engines" than to "Toyota vs Honda". Claude is a foundation model family from Anthropic. Gravity is an agent platform that runs on top of frontier models, including Claude. They are not substitutes. The confusion is real, and it leads buyers to either underspend on a model API and expect agent behaviour, or to over-engineer on top of an API when a platform would have shipped faster.

This piece is not a feature war. It is a category map. I will explain what Claude is in 2026, what Gravity is, where the overlap is real, where it is not, and when each is the right purchase. I will also be upfront about the fact that Gravity uses Claude as one of its reasoning engines, because pretending otherwise would be misleading and, frankly, bad for buyers.

The category confusion (and where it comes from)

Anthropic shipped Claude as a chat product in 2023 and added Computer Use in October 2024 (Anthropic, 2024), Claude Code as a CLI in early 2025, and a richer Skills surface in 2025. Each of those product additions blurs the line between "model API" and "agent product". For a buyer scrolling Twitter, Claude looks like it does everything an agent platform does. The confusion has roots in three places.

The chat surface looks like an agent

Claude.ai is a chat product wrapped around the Claude model. Inside one conversation it can call tools, write code, search the web, run computer-use actions. To the user that is indistinguishable from "agent behaviour". But the unit of work is the conversation, not a deployed long-running task. Close the tab and the agent is gone.

The model has agentic capabilities

Computer Use, tool use, Skills, MCP servers. These are capabilities of the model, not deployments. The model knows how to emit a tool call. Something else has to be running that turns the tool call into an action and feeds the result back into the loop. See AI agent vs LLM for the deeper version of this argument.
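That division of labour is easiest to see in code. Here is a minimal sketch of the loop that has to exist outside the model: the model proposes a tool call, the runtime executes it, and the result goes back into the conversation until the model stops asking for tools. `fake_model` is a hypothetical stand-in for a real model call, and the message shapes are simplified; in Anthropic's Messages API the equivalents are `tool_use` and `tool_result` content blocks.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> function the runtime actually executes.
TOOLS: dict[str, Callable[[dict], str]] = {
    "get_weather": lambda args: f"Sunny in {args['city']}",
}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a model call (assumption, not a real API).

    Asks for a tool on the first turn, then answers once a tool result exists.
    """
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "get_weather", "input": {"city": "Paris"}}
    return {"type": "text", "text": f"Done: {tool_results[-1]['content']}"}


def run_agent(task: str, model: Callable[[list[dict]], dict], max_steps: int = 5) -> str:
    """Minimal agent loop: call the model, run any tool it requests, feed the result back."""
    messages: list[dict] = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = model(messages)
        if reply["type"] == "text":
            return reply["text"]  # the model is finished; no more tool calls
        # The model only *emitted* a tool call; the runtime performs the action.
        result = TOOLS[reply["name"]](reply["input"])
        messages.append({"role": "assistant", "content": reply})
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("step budget exhausted")


print(run_agent("What's the weather in Paris?", fake_model))
```

Everything in `run_agent` (the tool registry, the step budget, the error path) is the part a deployment has to own.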

Marketing copy is loose

Half the vendors in 2026 call their model API "an agent". Half the buyers call any LLM call "an agent". The terminology has decayed. The result is RFPs that compare a foundation model to a deployed multi-agent platform on the same line, which is like comparing a battery to a flashlight.

What Claude actually is in 2026

Claude is a family of foundation models from Anthropic, available through an API and a chat product, with five adjacent product surfaces. According to Anthropic's pricing page, Claude Sonnet sits around $3 per million input tokens and $15 per million output tokens (Anthropic, 2026). That is the unit you are buying when you buy Claude. Let me list the surfaces precisely.
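Because the unit is the token, the cost of a call is simple arithmetic. A small sketch, assuming the $3 / $15 per million Sonnet rates quoted above and ignoring discounts such as prompt caching or batch pricing:

```python
# Rates quoted above for Claude Sonnet, in USD per million tokens (check the live pricing page).
INPUT_USD_PER_MTOK = 3.00
OUTPUT_USD_PER_MTOK = 15.00


def call_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one API call in USD at list price (no caching or batch discounts)."""
    return (input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


# Example: a 20,000-token prompt that produces a 1,000-token answer.
print(f"${call_cost_usd(20_000, 1_000):.3f}")  # $0.075
```

Output tokens cost five times as much as input tokens at these rates, so verbose agents get expensive faster than long prompts do.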

The model API

Text in, text out, with optional tool use and structured outputs. The API supports the messages format, system prompts, tool definitions, and Computer Use as a beta tool. Documentation is at docs.anthropic.com.
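For orientation, a Messages API request with one tool definition looks roughly like the payload below. It is built as a plain dictionary and not sent anywhere; the model ID and the `lookup_invoice` tool are hypothetical placeholders, and docs.anthropic.com remains the authority on current fields.

```python
import json

# Hypothetical tool the model may ask to call; input_schema is standard JSON Schema.
lookup_invoice_tool = {
    "name": "lookup_invoice",
    "description": "Fetch an invoice by its ID from the billing system.",
    "input_schema": {
        "type": "object",
        "properties": {"invoice_id": {"type": "string"}},
        "required": ["invoice_id"],
    },
}

request_body = {
    "model": "claude-sonnet-PLACEHOLDER",  # placeholder, not a real model ID
    "max_tokens": 1024,
    "system": "You are a billing assistant.",
    "tools": [lookup_invoice_tool],
    "messages": [{"role": "user", "content": "What is the status of invoice INV-42?"}],
}

print(json.dumps(request_body, indent=2))
```

If the model decides to use the tool, the response's `stop_reason` is `"tool_use"` and its content includes a `tool_use` block with an `id`, `name` and `input`. Your code runs the tool and sends the output back in a `tool_result` block that references that `id`.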

Computer Use (October 2024)

A capability that lets Claude perceive screens and emit mouse and keyboard actions. Announced by Anthropic on 22 October 2024 (Anthropic, 2024). Computer Use is a tool the model can call. It does not include a runtime, a recovery layer, or a deployment story. You bring those.

Claude Code (CLI)

An agentic coding tool that runs inside the terminal, where Claude reads your codebase, writes diffs, runs tests, and iterates. Released in 2025. Claude Code is the cleanest example Anthropic ships of a real agent loop. It is also tightly scoped to the developer workstation.

Skills

A way to package instructions, scripts, and resources that Claude can load on demand. Skills make Claude reusable across tasks, but they live inside Claude's surfaces (Claude.ai, Claude Code), not on a buyer's infrastructure.

MCP (Model Context Protocol)

An open protocol Anthropic introduced for connecting models to external tools and data sources (MCP spec, 2024). MCP is genuinely good. It is also a protocol, not a deployment. Someone has to host the MCP servers, manage credentials, and run the agent that uses them.
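MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The sketch below shows the server-side half, the part somebody has to host: parse a request, dispatch it to a tool, and wrap the output as an MCP tool result. `send_followup_email` is hypothetical, and a real MCP server also handles the initialisation handshake, `tools/list`, a transport such as stdio or HTTP, and authentication, none of which is shown.

```python
import json


def send_followup_email(arguments: dict) -> str:
    """Hypothetical tool hosted by this server."""
    return f"queued follow-up to {arguments['to']}"


TOOL_HANDLERS = {"send_followup_email": send_followup_email}


def _error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle_request(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 message; only `tools/call` is handled in this sketch."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return _error(req.get("id"), -32601, "Method not found")  # standard JSON-RPC code
    params = req.get("params", {})
    handler = TOOL_HANDLERS.get(params.get("name"))
    if handler is None:
        return _error(req.get("id"), -32602, f"Unknown tool: {params.get('name')}")
    text = handler(params.get("arguments", {}))
    # MCP tool results carry a list of content items plus an isError flag.
    result = {"content": [{"type": "text", "text": text}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "send_followup_email", "arguments": {"to": "lead@example.com"}},
}
print(handle_request(json.dumps(request)))
```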

Anthropic's product strategy is to ship the engine and a reference body, then let an ecosystem build the cars. Claude Code and Computer Use are reference bodies. Skills and MCP are the wiring. The strategy is consistent with being a model company, not a vertical app company.

What Gravity actually is

Gravity is an agent platform: deployed, recurring, integrated agents that run for you 24/7 after you describe a task in plain language. The thesis is "describe outcome, not workflow". McKinsey estimated that generative AI, combined with other technologies, could automate work activities that absorb 60-70% of employees' time (McKinsey, 2024). My read is that capturing that value takes deployed automation rather than chat, and that is the gap Gravity fills. Buyers describe an outcome. Gravity deploys an agent. The agent runs.

Across three prior startups, the same lesson kept showing up: buyers do not buy capabilities, they buy outcomes. A founder does not want an LLM API; they want their leads followed up. A small ops team does not want a tool layer; they want the invoice reconciliation done by Monday. The platform has to package the outcome.

What the platform layer adds

Six things, each of which is real infrastructure work:

- Deployment, so the agent runs on managed compute.

- Integrations, so the agent can read Gmail, Slack, Notion, Stripe, HubSpot without the buyer wiring OAuth.

- Memory, so the agent remembers what it did last Tuesday.

- Monitoring, so failures surface to a dashboard, not to a buyer reading logs.

- Recovery, so transient errors do not kill the run.

- Billing for non-engineers, so the cost is per task or per outcome, not per token.

For a fuller breakdown of these layers see how AI agents actually work and AI agent deployment models.

Where they overlap: Computer Use, Claude Code, Skills

Gartner forecast in 2024 that by 2028 around 33% of enterprise software will include agentic AI, up from less than 1% in 2024 (Gartner, 2024). That growth pressure is why Anthropic is shipping more agent-ish surfaces, and why those surfaces overlap with platforms. Three places the overlap is real.

Computer Use overlaps with browser-driving agents

If your use case is "drive a browser to fill in a form on a SaaS app that has no API", Computer Use can do that, and a deployed agent platform can also do that. The difference is the runtime. Computer Use needs a host machine, a container, a recovery loop, and a watchdog. A platform supplies those.
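The watchdog is the least glamorous piece and the easiest to forget. A minimal sketch, assuming each computer-use step can be wrapped as a plain Python callable: run it under a timeout, retry a bounded number of times, and surface a failure instead of hanging. `flaky_click` and `slow_screenshot` are hypothetical actions, and a real runtime would also restart the browser or container between attempts.

```python
import concurrent.futures as cf
import time
from typing import Callable, Optional


def run_with_watchdog(action: Callable[[], str], timeout_s: float, attempts: int = 3) -> str:
    """Run one UI action under a timeout, retrying a bounded number of times.

    Caveat: Python threads cannot be killed, so a timed-out action may keep
    running in the background; a real runtime would restart the browser or container.
    """
    last_error: Optional[BaseException] = None
    for _ in range(attempts):
        pool = cf.ThreadPoolExecutor(max_workers=1)
        future = pool.submit(action)
        try:
            return future.result(timeout=timeout_s)
        except cf.TimeoutError as exc:
            last_error = exc  # hung page load, frozen screen, etc.
        except Exception as exc:
            last_error = exc  # the action itself raised
        finally:
            pool.shutdown(wait=False)  # never block on a hung action
    raise RuntimeError(f"action failed after {attempts} attempts") from last_error


calls = {"n": 0}


def flaky_click() -> str:
    """Hypothetical UI action: fails on the first try, succeeds afterwards."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("page not ready")
    return "clicked Submit"


def slow_screenshot() -> str:
    """Hypothetical action that takes longer than the watchdog allows."""
    time.sleep(0.5)
    return "too late"


print(run_with_watchdog(flaky_click, timeout_s=2.0))  # clicked Submit
try:
    run_with_watchdog(slow_screenshot, timeout_s=0.05, attempts=2)
except RuntimeError as err:
    print(f"surfaced to the dashboard: {err}")
```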

Claude Code overlaps with coding agents

Claude Code is a real agent loop scoped to a developer terminal. If your job is "automate refactors on my laptop", Claude Code is excellent and probably enough. If your job is "automate refactors as a service across 200 microservices on managed infrastructure", you are back in platform territory.

Skills overlap with platform task templates

A Skill in Claude packages instructions and resources for a task. A platform task template packages the same plus the deployment, the integrations, and the billing. The overlap is the packaging idea. The non-overlap is everything else.

Where they do not overlap: deployment, monitoring, ops, integrations, billing

The non-overlap is much larger than the overlap. Anthropic's own engineering post on building effective agents makes a version of this point: describing its SWE-bench agent, it reports that "we actually spent more time optimizing our tools than the overall prompt" (Anthropic, 2024). That observation is from the company that makes Claude. Five places the platform layer is doing work the model cannot do.

Deployment

Claude does not deploy itself. There is no "Claude, run this every Monday at 9am forever" surface. A platform has a scheduler, a runtime, and a state store that survives restart.
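Even the smallest version of "every Monday at 9am, forever" needs two things the model does not have: a way to compute the next run time and somewhere durable to record what already ran. A minimal sketch, assuming naive local datetimes and a JSON file as the state store; the file name is hypothetical, and a production scheduler would handle time zones and use a database.

```python
import json
import tempfile
from datetime import datetime, timedelta
from pathlib import Path
from typing import Optional


def next_monday_9am(now: datetime) -> datetime:
    """Next Monday 09:00 strictly after `now` (naive local time, no DST handling)."""
    days_ahead = (0 - now.weekday()) % 7  # Monday is weekday() == 0
    candidate = (now + timedelta(days=days_ahead)).replace(
        hour=9, minute=0, second=0, microsecond=0)
    if candidate <= now:  # already Monday at or after 09:00
        candidate += timedelta(days=7)
    return candidate


def record_run(state_file: Path, ran_at: datetime) -> None:
    """Persist the last completed run so a restarted worker does not repeat it."""
    state_file.write_text(json.dumps({"last_run": ran_at.isoformat()}))


def load_last_run(state_file: Path) -> Optional[datetime]:
    """Read the last completed run back after a restart (None if it never ran)."""
    if not state_file.exists():
        return None
    return datetime.fromisoformat(json.loads(state_file.read_text())["last_run"])


# Usage: Wednesday 13 May 2026, 14:00 -> next run is Monday 18 May, 09:00.
print(next_monday_9am(datetime(2026, 5, 13, 14, 0)))  # 2026-05-18 09:00:00
state = Path(tempfile.gettempdir()) / "weekly_agent_state.json"  # hypothetical file name
record_run(state, datetime(2026, 5, 18, 9, 0))
print(load_last_run(state))
```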

Monitoring

Claude does not surface a dashboard of all running agents, last failure, cost per task, success rate, or P95 latency. The model API gives you logs of one call. The platform gives you an ops view across thousands of runs.
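The maths behind an ops view is not exotic; the hard part is collecting the records across thousands of runs. A sketch of the per-agent roll-up, assuming each run is logged as a small record (the `RunRecord` shape is hypothetical) and using the nearest-rank method for P95:

```python
import math
from dataclasses import dataclass


@dataclass
class RunRecord:
    """Hypothetical per-run record an agent runtime might log."""
    succeeded: bool
    latency_s: float
    cost_usd: float


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(95 * len(ordered) / 100)  # 1-based nearest rank
    return ordered[rank - 1]


def summarise(runs: list[RunRecord]) -> dict:
    """Roll a batch of run records up into the numbers an ops dashboard shows."""
    return {
        "runs": len(runs),
        "success_rate": sum(r.succeeded for r in runs) / len(runs),
        "cost_per_task_usd": sum(r.cost_usd for r in runs) / len(runs),
        "p95_latency_s": p95([r.latency_s for r in runs]),
    }


# Usage: 20 runs with latencies of 1..20 s; runs 10 and 20 failed.
runs = [RunRecord(succeeded=(i % 10 != 0), latency_s=float(i), cost_usd=0.05) for i in range(1, 21)]
print(summarise(runs))
```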

Integrations

Claude can call any tool you wire up. Wiring up Gmail OAuth, Slack OAuth, Stripe OAuth, HubSpot OAuth, Notion OAuth, and refreshing tokens when they expire is real engineering work. A platform amortises that across many buyers.
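Token refresh is a good example of small work that multiplies across integrations. A minimal sketch of a cached access token that refreshes itself shortly before expiry; `refresh_fn` stands in for a provider-specific OAuth refresh call and is hypothetical here, as is the 60-second safety margin.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class TokenCache:
    """Holds one integration's access token and refreshes it before it expires."""
    refresh_fn: Callable[[], tuple[str, float]]  # hypothetical: returns (token, lifetime_s)
    margin_s: float = 60.0  # refresh this early so a token never expires mid-call
    _token: Optional[str] = field(default=None, init=False)
    _expires_at: float = field(default=0.0, init=False)

    def get(self, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        if self._token is None or now >= self._expires_at - self.margin_s:
            token, lifetime_s = self.refresh_fn()
            self._token, self._expires_at = token, now + lifetime_s
        return self._token


counter = {"n": 0}


def fake_refresh() -> tuple[str, float]:
    """Stand-in for a provider's refresh-token exchange."""
    counter["n"] += 1
    return f"access-token-{counter['n']}", 3600.0


cache = TokenCache(refresh_fn=fake_refresh)
print(cache.get(now=0.0))     # access-token-1 (first call refreshes)
print(cache.get(now=1000.0))  # access-token-1 (still valid, reused)
print(cache.get(now=3550.0))  # access-token-2 (inside the 60 s margin, refreshed)
```

Multiply this by every provider, every buyer, and every edge case (revoked grants, rotated refresh tokens) and the amortisation argument becomes concrete.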

Recovery and retries

If a tool call fails because a SaaS API rate-limited you, Claude will not know to back off and retry tomorrow. The agent loop has to handle that. See AI agent tool use for the failure modes around tool calls.
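Backing off is loop logic, not model logic. A minimal sketch, assuming the tool wrapper signals rate limiting by raising a hypothetical `RateLimited` exception: retry with exponential backoff and full jitter a few times, then hand the task back to the scheduler for a later slot instead of failing the run. The `sleep` parameter is injectable so the example runs instantly.

```python
import random
import time
from typing import Callable


class RateLimited(Exception):
    """Hypothetical error a tool wrapper raises on an HTTP 429 from a SaaS API."""


class Deferred(Exception):
    """Tells the scheduler to retry this task in a later run (e.g. tomorrow)."""


def call_with_backoff(tool: Callable[[], str], max_attempts: int = 4,
                      base_delay_s: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> str:
    for attempt in range(max_attempts):
        try:
            return tool()
        except RateLimited:
            if attempt == max_attempts - 1:
                break
            # Full jitter: wait a random time up to base * 2**attempt seconds.
            sleep(random.uniform(0, base_delay_s * 2 ** attempt))
    raise Deferred(f"still rate-limited after {max_attempts} attempts")


attempts = {"n": 0}


def sometimes_limited() -> str:
    """Hypothetical tool that is rate-limited twice, then succeeds."""
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RateLimited()
    return "lead updated"


waits = []  # record the chosen delays instead of actually sleeping
print(call_with_backoff(sometimes_limited, sleep=waits.append))  # lead updated
```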

Billing non-engineers can read

Per-million-token pricing is right for engineers and wrong for SMB owners. A small business does not care that an agent used 2.3 million tokens; they care that it followed up with 14 leads and closed 3. The platform packages tokens into outcomes. See AI agent cost models.
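The packaging step is mostly bookkeeping. A sketch under stated assumptions: the buyer pays a flat price per completed outcome, while token spend is tracked internally to check margin. The $2 price per lead followed up and the 2,000,000 input / 300,000 output split of the 2.3 million tokens are illustrative numbers, not Gravity's pricing; the token rates are the Sonnet list rates quoted earlier.

```python
from dataclasses import dataclass

# Illustrative assumptions, not real pricing.
PRICE_PER_LEAD_FOLLOWED_UP_USD = 2.00
INPUT_USD_PER_MTOK, OUTPUT_USD_PER_MTOK = 3.00, 15.00  # Sonnet list rates


@dataclass
class MonthUsage:
    leads_followed_up: int
    input_tokens: int
    output_tokens: int


def invoice(usage: MonthUsage) -> dict:
    """What the buyer sees (outcomes) versus what the platform tracks (tokens)."""
    token_cost = (usage.input_tokens * INPUT_USD_PER_MTOK
                  + usage.output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000
    billed = usage.leads_followed_up * PRICE_PER_LEAD_FOLLOWED_UP_USD
    return {
        "line_item": f"{usage.leads_followed_up} leads followed up",
        "billed_usd": billed,
        "internal_token_cost_usd": token_cost,
        "internal_margin_usd": billed - token_cost,
    }


print(invoice(MonthUsage(leads_followed_up=14, input_tokens=2_000_000, output_tokens=300_000)))
```

Only `line_item` and `billed_usd` would reach the buyer; the token figures stay on the platform's side of the ledger.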

Honest disclosure: Gravity uses Claude (and other models) as an engine

Gravity routes reasoning calls to multiple frontier models, including Claude. Stanford HAI's 2025 AI Index reports that 78% of organisations used AI in 2024, up from 55% the year before (Stanford HAI, 2025). As adoption matures, routing across more than one model provider for cost and reliability has become standard architecture, and Gravity follows it.

Inside Gravity, the router picks the model per task based on three signals: capability fit (some tasks suit Claude's reasoning, others suit GPT or open-weights models for cost), latency requirements, and price ceiling per task. Claude is heavily used for long-context reasoning and computer-use style work. Other engines pick up shorter or cheaper tasks. The router itself is one of the platform's deepest pieces of code.
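The three signals translate naturally into a filter-then-rank rule. The sketch below is a generic illustration, not Gravity's router: the engine names, capability scores, latencies and prices are all made up, and a real router would measure or learn these rather than hard-code them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelOption:
    name: str                     # hypothetical engine label
    capability: dict[str, float]  # task kind -> fit score in [0, 1] (made-up numbers)
    p95_latency_s: float
    est_cost_usd: float           # estimated cost of one typical task


@dataclass
class Task:
    kind: str
    max_latency_s: float
    price_ceiling_usd: float


CATALOGUE = [
    ModelOption("frontier-a", {"long_context": 0.95, "short_extract": 0.90}, 20.0, 0.40),
    ModelOption("frontier-b", {"long_context": 0.85, "short_extract": 0.90}, 8.0, 0.25),
    ModelOption("open-weights-small", {"long_context": 0.40, "short_extract": 0.80}, 2.0, 0.02),
]


def route(task: Task, catalogue: list[ModelOption]) -> Optional[ModelOption]:
    """Filter on the latency and price limits, then pick the best capability fit.

    Ties on fit go to the cheaper model; None means no engine qualifies.
    """
    eligible = [
        m for m in catalogue
        if m.p95_latency_s <= task.max_latency_s and m.est_cost_usd <= task.price_ceiling_usd
    ]
    if not eligible:
        return None
    return max(eligible, key=lambda m: (m.capability.get(task.kind, 0.0), -m.est_cost_usd))


print(route(Task("long_context", max_latency_s=60.0, price_ceiling_usd=1.00), CATALOGUE).name)
print(route(Task("short_extract", max_latency_s=10.0, price_ceiling_usd=0.05), CATALOGUE).name)
```

Filtering on hard constraints first keeps the ranking step simple: capability only decides among engines the task can afford and wait for.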

Pretending otherwise would be silly. The agent platform business is not the foundation model business. Anthropic, OpenAI, Google, Mistral, and the open-weights ecosystem are running a different race with a different cost structure. A platform that tries to compete with all of them on models while also running agents loses on both fronts. The honest framing is: we are not a Claude competitor; we are a Claude customer, and a customer of others, and we package the result.

When buying Claude API access is enough

Anthropic publicly disclosed in 2025 that Claude API revenue grew more than 10x year over year, with thousands of enterprise customers building their own agents directly on the API (Anthropic news, 2025). For many of those buyers, the API is genuinely enough. Three signals say "buy the API directly".

You have an engineering team that wants to own the loop

You have engineers. They want to write the orchestration, the tool layer, the memory store, the safety guardrails. They are evaluating Claude vs GPT vs open-weights on benchmarks. They want low-level control. Buy the API. See build vs buy for the deeper trade-off.

Your use case is well-scoped and stable

One workflow, one tool set, no integration sprawl. The engineering investment to wrap an agent around the API is bounded and pays back. You do not need a platform's flexibility because you do not need flexibility.

You are doing in-product LLM features, not standalone agents

If you are adding "summarise this document" to your SaaS, you are buying a model capability, not an agent. Claude API or any equivalent will do. You do not need a deployed long-running agent runtime for in-product features.

When you need an agent platform on top

A 2025 Forrester study found that 64% of business buyers who tried to roll their own LLM agents reported "longer than expected implementation timelines" and 41% abandoned the build (Forrester, 2025). The implication is clear. Three signals say "buy the platform".

The buyer is not an engineer

Founders, ops leads, marketers, salespeople. They want outcomes, not runtimes. They cannot wire OAuth. They will not maintain a prompt repo. They will not read CloudWatch logs. The platform exists to serve them.

You need recurring, deployed work, not one-off conversations

"Follow up with every new lead within 10 minutes, forever" is a deployed agent. "Help me write this one email" is a chat product. The first is platform territory. The second is Claude.ai.

You want one bill, one dashboard, one accountable layer

Multi-model routing, integrations, monitoring, recovery, audit, billing. You do not want to integrate ten vendors. The platform consolidates. See AI agent orchestration for what that consolidation looks like under the hood.

The framework I learned across three startups: when the buyer is precise about the outcome they want, the right purchase is usually a platform. When the buyer is precise about the capability they want, the right purchase is usually an API. "I want my leads followed up" is an outcome. "I want a 200k-context-window model that supports tool use" is a capability.

Frequently asked questions

Is Gravity a Claude competitor?

No. Claude is a family of foundation models from Anthropic. Gravity is an agent platform that uses Claude (and other frontier models) as one of its reasoning engines. The relationship is closer to engine and car than to two competing cars. You can buy Claude API access directly from Anthropic; you can also buy an agent product that runs on Claude. They solve different jobs.

Does Gravity use Claude under the hood?

Yes. Gravity uses Claude as one of its reasoning engines, alongside other frontier models routed per task. The agent platform layer handles deployment, tool integration, memory, monitoring, billing, and safety. Claude provides the reasoning step inside each iteration of the agent loop. Most serious agent platforms in 2026 use Claude or GPT or a mix, because building a frontier model is not the same business as running an agent.

What is Claude Computer Use and is it the same as an agent platform?

Claude Computer Use, launched in October 2024 by Anthropic, gives Claude the ability to perceive screens and emit mouse and keyboard actions. It is a capability of the model, not a deployed product. To use it in production you still need a runtime, a tool registry, monitoring, recovery logic, and billing. An agent platform supplies those layers. Computer Use is one tool the platform can wire in.

When should I just buy Claude API access instead of an agent platform?

Buy Claude API directly when you have an engineering team, a clear use case, and you want to own the agent loop. You will write the orchestration, the tool layer, the memory store, the safety guardrails, and the monitoring. You will pay per token to Anthropic and bear the maintenance. This works well for builders. It works poorly for non-engineers who want outcomes.

When do I need an agent platform on top of Claude?

When the buyer is a non-engineer who wants an outcome, not a runtime. When you need a deployed long-running agent with integrations across SaaS tools, monitoring, recovery, retries, audit logs, and per-task billing. When the team does not want to maintain prompt logic, model routing, or the agent loop. The platform pays for itself the first time an agent recovers from a failure mode you did not write.

Three takeaways before you close this tab

- Claude is a model; Gravity is a platform. Not substitutes. Different categories.

- Gravity uses Claude as one engine, alongside other frontier models, routed per task.

- The right purchase depends on the buyer. Engineers wanting capability buy the API. Non-engineers wanting outcomes buy the platform.

Sources

- Anthropic, "Introducing computer use, a new Claude 3.5 Sonnet, and Claude 3.5 Haiku", 22 October 2024, anthropic.com/news/3-5-models-and-computer-use

- Anthropic, "Building Effective Agents", retrieved 2026-05-14, anthropic.com/engineering/building-effective-agents

- Anthropic, "Pricing", retrieved 2026-05-14, anthropic.com/pricing

- Anthropic, "Documentation", retrieved 2026-05-14, docs.anthropic.com

- Anthropic, "News", retrieved 2026-05-14, anthropic.com/news

- Model Context Protocol specification, retrieved 2026-05-14, modelcontextprotocol.io

- McKinsey, "The economic potential of generative AI: The next productivity frontier", 2024, mckinsey.com

- Gartner, "Top Strategic Technology Trends for 2025", 22 October 2024, gartner.com

- Stanford HAI, "AI Index Report", 2025, aiindex.stanford.edu

- Forrester research overview, 2025, forrester.com/research

- Aryan Agarwal, "Gravity platform architecture", internal v1, May 2026, About