Selling on Amazon is mostly the act of reading reviews and responding to them. The reviews tell you whether the product is right, whether the listing is right, whether the packaging survived FBA, and whether your seller rating will be high enough to keep the buy box next quarter. A seller with 200 SKUs and a small team checks reviews on Monday morning, gets through 30 percent of the inbox, and triages the rest reactively when a star rating drops or a flag fires in Seller Central.

An AI agent for Amazon seller review monitoring does the daily pass that would take the seller three hours if they sat down to do it. It reads everything, classifies, drafts, and surfaces. The seller spends 20 minutes a day reviewing drafts and posting responses in their own voice. Reviews that need product-team attention bubble up because, for example, three of them point at the same defect. Suspected fakes go to a separate list, and the seller decides whether to report them through Amazon's mechanism.

What this agent does

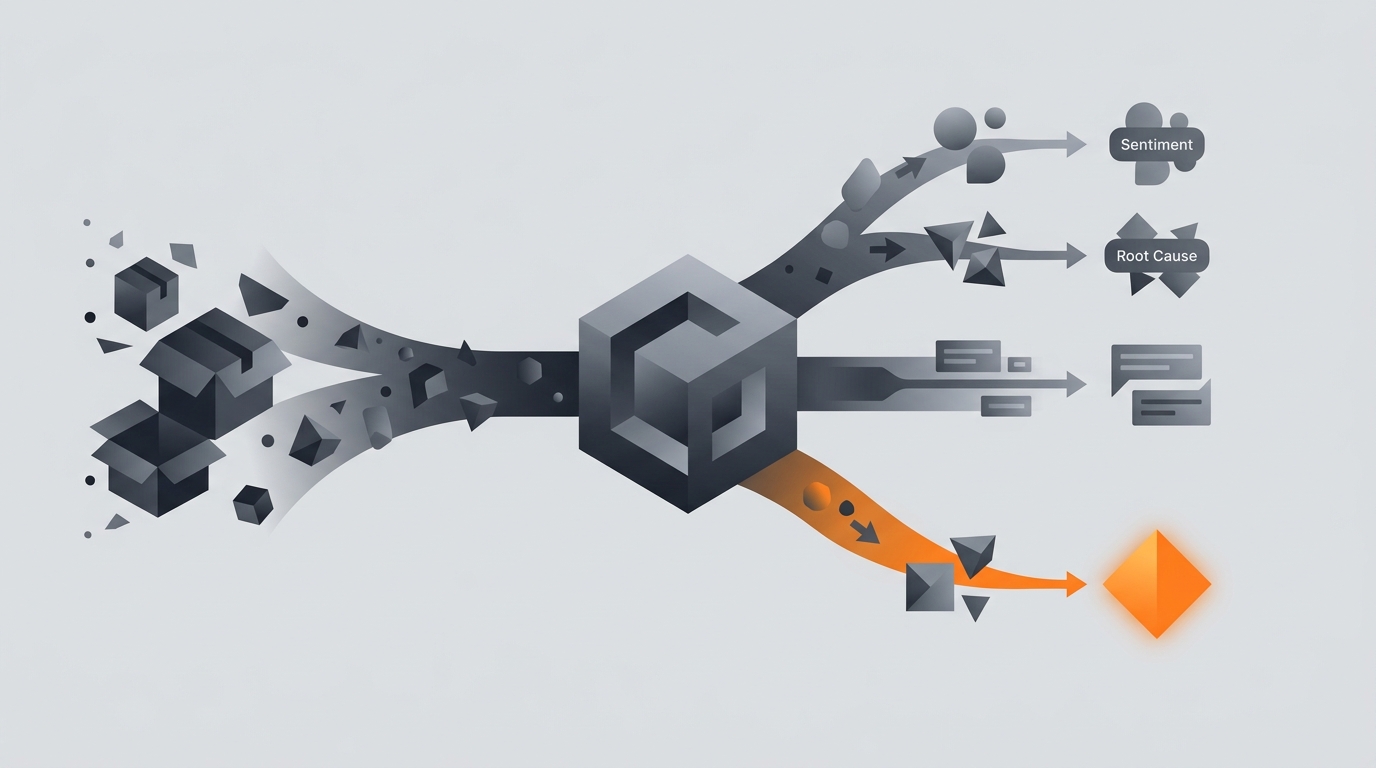

The agent polls each configured ASIN through the Amazon Selling Partner API once a day. For each new review since the last poll, it pulls the star rating, review text, reviewer history, verified-purchase flag, and any seller-feedback metadata. It runs the sentiment classifier and the root-cause classifier. It drafts a seller response that matches the sentiment and the root cause. It logs everything into a daily digest grouped by ASIN and by bucket.
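The daily pass above can be sketched as follows. This is a minimal sketch, assuming the new reviews for each ASIN have already been fetched into a list. The `Review` shape, `daily_pass`, and the `classify` and `draft` callables are hypothetical stand-ins, not Selling Partner API types; `classify` plays the role of the sentiment and root-cause classifiers.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Callable


@dataclass
class Review:
    # Hypothetical shape mirroring the fields the agent pulls; not an SP-API schema.
    review_id: str
    asin: str
    stars: int
    text: str
    verified_purchase: bool
    posted_at: datetime


Digest = dict[str, dict[str, list[tuple[Review, str]]]]


def daily_pass(
    reviews: list[Review],
    last_poll: datetime,
    classify: Callable[[Review], str],
    draft: Callable[[Review, str], str],
) -> Digest:
    """Build the digest: ASIN -> bucket -> [(review, drafted response)]."""
    digest: Digest = defaultdict(lambda: defaultdict(list))
    for review in reviews:
        if review.posted_at <= last_poll:
            continue  # already handled in an earlier run
        bucket = classify(review)  # stand-in for the classifiers
        digest[review.asin][bucket].append((review, draft(review, bucket)))
    return digest
```

Nothing in the sketch writes back to Amazon. The output is only the grouped digest the seller reads.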

What the agent does not do: it does not post responses, report reviews to Amazon, message reviewers, change listing copy or product photos, or pause SKUs. The write surface is zero. The agent produces a daily document that the seller acts on.

For more on read-then-recommend patterns, see what an AI agent can actually do.

Sources of truth

- Amazon Selling Partner API. Reviews endpoint, product detail endpoint, FBA inventory endpoint for shipping context.

- Your product detail pages. Title, bullets, photos, A+ content. Used to check whether a review's complaint matches what was promised on the listing.

- Your category baseline. The agent maintains a running average of star rating, complaint distribution, and review velocity per ASIN, kept separately for each marketplace. A day that spikes well above that baseline is flagged in the digest.

- Output: a daily digest with drafted responses, root-cause clusters, and a suspected-fakes list.
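The baseline can be sketched as running totals per (marketplace, ASIN) pair, with a spike check run before each day's data is folded in. This is a minimal sketch, assuming "negative" means 1 or 2 stars. `Baseline`, `is_spike`, `check_and_update`, and the thresholds (`factor=3.0`, `min_days=14`, `min_count=3`) are hypothetical and illustrative, not tuned values, and the complaint distribution is left out.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Baseline:
    """Running totals for one (marketplace, ASIN) pair; averages are derived."""
    days: int = 0
    reviews: int = 0
    star_total: int = 0
    negatives: int = 0  # reviews rated 1 or 2 stars (assumed definition)

    @property
    def avg_stars(self) -> float:
        return self.star_total / self.reviews if self.reviews else 0.0

    @property
    def reviews_per_day(self) -> float:  # review velocity
        return self.reviews / self.days if self.days else 0.0

    @property
    def negatives_per_day(self) -> float:
        return self.negatives / self.days if self.days else 0.0

    def update(self, todays_stars: list[int]) -> None:
        """Fold one day's star ratings into the running totals."""
        self.days += 1
        self.reviews += len(todays_stars)
        self.star_total += sum(todays_stars)
        self.negatives += sum(1 for s in todays_stars if s <= 2)


def is_spike(b: Baseline, todays_stars: list[int], factor: float = 3.0,
             min_days: int = 14, min_count: int = 3) -> bool:
    """Flag when today's negatives are well above this ASIN's own daily average."""
    if b.days < min_days:
        return False  # too little history to call anything a spike
    todays_negatives = sum(1 for s in todays_stars if s <= 2)
    return todays_negatives >= min_count and todays_negatives > factor * b.negatives_per_day


def check_and_update(baselines: dict[tuple[str, str], Baseline],
                     marketplace: str, asin: str, todays_stars: list[int]) -> bool:
    # Keyed per marketplace, so amazon.com and amazon.in baselines never mix.
    b = baselines[(marketplace, asin)]
    spiked = is_spike(b, todays_stars)  # compare before today enters the average
    b.update(todays_stars)
    return spiked


baselines: dict[tuple[str, str], Baseline] = defaultdict(Baseline)
```

Comparing each ASIN against its own history, rather than against a global threshold, is what lets a 4.2 on one marketplace mean something different from a 4.2 on another.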

The agent does not read Amazon's seller-internal metrics dashboards. It does not browse competitor listings. The reviews are the unit. For the broader rationale, see how to limit agent actions.

The seven root-cause buckets

Each negative review is classified into one of seven buckets. The bucket carries more operational signal than the star rating does.

- Product defect. The product malfunctioned, broke, or did not work as designed. Examples: "stopped working after 3 days", "missing a piece", "the seam came apart". Three of these on one ASIN within 7 days warrants a manufacturing review.

- Packaging or shipping damage. Arrived crushed, leaking, or in a damaged box. Three of these on one ASIN within 7 days points at the 3PL or the packaging, not the product.

- Sizing or fit. Wrong size, runs small, or does not fit the device the listing said it would fit. Frequent for apparel and accessories; the fix is usually in the listing copy, not the product.

- Expectations mismatch. The reviewer expected a different feature, colour, or capability based on the listing. The fix is in the listing photos or bullets, not the product.

- Accessory or compatibility issue. The product is fine but the customer's setup is wrong. The fix is usually a clearer bullet about what is and is not compatible.

- Customer error. The reviewer used the product wrong. The drafted response is empathetic; the fix is sometimes a clearer instruction in the box or in the A+ content.

- Unclassifiable. The review is too short, too vague, or in a language the classifier is not confident in. Surfaced for the seller to read directly.

The buckets drive the daily digest: a cluster of one bucket on one ASIN triggers a flag, so the seller knows whether to ping the manufacturer, the 3PL, the listing team, or no one.
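The cluster flag can be sketched as a count per (ASIN, bucket) over a 7-day window, using the three-in-7-days rule from the bucket list. This is a minimal sketch: the bucket identifiers, the `ROUTE` table mapping buckets to teams, and `find_clusters` are hypothetical names chosen for illustration.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical routing table: which team a cluster in each bucket points at.
ROUTE = {
    "product_defect": "manufacturer",
    "packaging_or_shipping": "3PL / packaging",
    "sizing_or_fit": "listing team",
    "expectations_mismatch": "listing team",
    "accessory_or_compatibility": "listing team",
    "customer_error": "no one (maybe clearer instructions)",
    "unclassifiable": "seller reads directly",
}


def find_clusters(classified: list[tuple[str, str, datetime]], now: datetime,
                  window_days: int = 7, threshold: int = 3) -> list[tuple[str, str, int, str]]:
    """Return (asin, bucket, count, route) for each bucket that clusters on one ASIN.

    Counting is per (ASIN, bucket) pair, never across ASINs.
    """
    cutoff = now - timedelta(days=window_days)
    counts = Counter((asin, bucket) for asin, bucket, posted_at in classified
                     if posted_at >= cutoff)
    return [(asin, bucket, n, ROUTE.get(bucket, "seller reads directly"))
            for (asin, bucket), n in sorted(counts.items()) if n >= threshold]
```

Keying the counter on the ASIN as well as the bucket is deliberate: a "fit issue" on apparel and one on a phone case never add up to a cluster.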

Suspected fake review detection

Fake reviews are a real category. The agent flags them with caution; false positives damage trust with Amazon and with genuine customers.

The agent checks three signals. One signal alone is too noisy, so the seller sees a flagged review only when at least two of the three agree.

- Reviewer-account signals. Account is under 30 days old, has reviewed more than 10 products in the past 14 days, or has a clustering pattern with other suspicious accounts (same wording, same review-burst timing).

- Review-text signals. Mentions a competitor product by name in a way that reads promotional, contains phrases from a known fake-review template, or makes claims that are false against the product detail page.

- Verified-purchase signal. The reviewer is not a verified purchaser, but the review is detailed enough that they probably should be. (Verified-purchase status alone is not used as a primary signal because gift orders and resold inventory legitimately produce non-verified reviews.)
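The two-of-three rule can be sketched as below. This is a minimal sketch, assuming the reviewer-account fields and the review-text signal have already been computed upstream (detecting template phrases or promotional competitor mentions is not shown). The class and function names and the 80-word "detailed enough" cutoff are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewerAccount:
    age_days: int
    reviews_last_14_days: int
    in_suspicious_cluster: bool  # shared wording or burst timing; detection not shown


@dataclass
class Candidate:
    account: ReviewerAccount
    text_signal: bool  # promotional competitor mention, template phrase, or false claim
    verified_purchase: bool
    word_count: int


def account_signal(a: ReviewerAccount) -> bool:
    return a.age_days < 30 or a.reviews_last_14_days > 10 or a.in_suspicious_cluster


def verified_signal(c: Candidate, detailed_words: int = 80) -> bool:
    # Non-verified alone is not enough (gifts, resold inventory); it must also be detailed.
    return (not c.verified_purchase) and c.word_count >= detailed_words


def fake_signals(c: Candidate) -> list[str]:
    """Names of the signals that fired, shown to the seller as supporting evidence."""
    signals = []
    if account_signal(c.account):
        signals.append("reviewer-account")
    if c.text_signal:
        signals.append("review-text")
    if verified_signal(c):
        signals.append("verified-purchase")
    return signals


def should_flag(c: Candidate, minimum: int = 2) -> bool:
    # Flag for the seller's decision only; nothing here reports to Amazon.
    return len(fake_signals(c)) >= minimum
```

Returning the list of fired signals, not just a boolean, is what lets the digest show the supporting evidence next to each suspected fake.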

The suspected fake goes onto a separate list in the daily digest with the supporting signals shown. The seller decides whether to report through Amazon's standard mechanism. The agent does not report.

Guardrails

- Zero write to Amazon. No responses posted, no reviews reported, no listing edits, no messages to customers.

- Drafts only. Every response is a draft in the digest. The seller posts in Seller Central.

- Two-signal minimum on fake flagging. One signal alone is noisy.

- Daily cap on flagged fakes per ASIN. If the agent finds more than 10 suspected fakes in one day on one ASIN, something else is happening (review bombing, classifier error). The digest surfaces the count and asks the seller to investigate manually.

- Audit log of every classification. The seller can override any classification, and overrides feed back into the classifier's calibration over time.

- Region-specific category baselines. The agent maintains baselines per marketplace; a 4.2 average on amazon.com is a different signal than a 4.2 on amazon.in.

- No customer PII retained. The agent processes reviewer names for classification but does not store them beyond the run. Compliance with Amazon's seller-data policy is the floor.
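Two of these guardrails, the daily fake cap and the no-retained-PII rule, can be sketched as the step that builds the suspected-fakes section of the digest. This is a minimal sketch: the dict keys, `DAILY_FAKE_CAP`, and `build_fake_section` are hypothetical names, and the cap of 10 comes from the guardrail above.

```python
from collections import defaultdict

DAILY_FAKE_CAP = 10  # more than this on one ASIN in one day means investigate manually


def build_fake_section(flagged: list[dict]) -> dict[str, dict]:
    """Group flagged reviews by ASIN, strip reviewer names, and apply the daily cap.

    Each input dict is assumed to carry 'asin', 'review_id', 'reviewer_name', 'signals'.
    """
    by_asin: dict[str, list[dict]] = defaultdict(list)
    for item in flagged:
        # Drop the reviewer name before anything reaches the digest or audit log.
        by_asin[item["asin"]].append({k: v for k, v in item.items() if k != "reviewer_name"})

    section: dict[str, dict] = {}
    for asin, items in by_asin.items():
        if len(items) > DAILY_FAKE_CAP:
            # Likely review bombing or a classifier error: surface the count, not the list.
            section[asin] = {"count": len(items),
                             "note": "over daily cap; investigate manually"}
        else:
            section[asin] = {"count": len(items), "items": items}
    return section
```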

For the broader safety frame, see AI agent safety and guardrails.

Common mistakes

- Auto-posting responses. Amazon's review-response system is a customer-facing signal. A boilerplate auto-response damages the brand more than no response at all.

- Treating star rating as the operational metric. The bucket distribution tells you more than the average rating. A 4.3-rated SKU with three defect reviews in 7 days is more urgent than a 3.9-rated SKU with consistent expectations-mismatch reviews.

- Aggressive fake reporting. Amazon penalises sellers who false-flag. A two-signal floor plus a manual seller decision is the right setup. Resist the temptation to auto-report.

- Ignoring positive reviews entirely. A positive review that mentions a competitor switch or a price anchor is operational signal. Surface the few that matter.

- Cross-ASIN aggregation without category awareness. A "fit issue" on apparel is not comparable to a "fit issue" on a phone case. Cluster within ASIN, not across.

- Skipping the daily cadence. Weekly is too late on Amazon. By the time you respond to a 1-star review a week later, the customer is gone and the review has compounded against your buy box.

Frequently asked questions

Can an AI agent monitor reviews on my Amazon listings?

Yes. The agent polls each of your ASINs daily via the Amazon Selling Partner API, pulls new reviews, classifies each one by star rating, sentiment, and root cause (product defect, shipping, sizing, expectations mismatch), drafts a seller response, and surfaces suspected fakes or review-policy violations. It does not post responses without you. Seller responses appear in your voice; the agent only stages the draft.

What is a root-cause classification?

The agent groups each negative review into one of seven buckets: product defect, packaging or shipping damage, sizing or fit, expectations mismatch (listing photo or copy issue), accessory or compatibility issue, customer error, and unclassifiable. The classification drives the operational signal you actually need. Three product-defect reviews on the same ASIN in 7 days is a manufacturing issue. Three shipping-damage reviews is a 3PL or packaging issue. The buckets tell you which team to ping.

Does the agent post seller responses on Amazon?

Not on its own. The agent drafts the response, the seller reviews and edits, then the seller posts via Seller Central. Amazon's review-response feature is a real customer-facing signal; an automated tone-deaf response is worse than no response. The agent's job ends at handing you a draft that should need no more than 30 seconds of editing.

How does it spot fake reviews?

Three signals, and at least two must agree before a review is flagged. Reviewer-account signals: the account is days old, or it reviews multiple unrelated competitor products in the same week. Review-text signals: the text mentions a competitor by name in a way that reads as paid placement, or its claim is provably false against your product detail page (wrong color, wrong size, wrong feature). Verified-purchase signal: the reviewer is not a verified purchaser, but the review is detailed enough that they probably should be. The agent surfaces the suspected fake for you to decide whether to report through Amazon's mechanism. It never reports automatically.

What about positive reviews?

Positive reviews get a simple thank-you draft and a flag if they mention something operationally interesting (a specific use case you did not market for, a competitor's product they switched from, a price-anchor signal). Most positive reviews need no response. The agent surfaces only those that move the operating loop.
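The positive-review flag can be sketched as a check for operational cues. This is a minimal sketch using hypothetical regular-expression cue lists; a production agent would more likely reuse its classifier, and the cue names and phrases here are illustrative assumptions, not a tested vocabulary.

```python
import re

# Hypothetical cue patterns for the three kinds of operational signal named above.
CUES = {
    "competitor_switch": re.compile(r"\b(switched from|used to use|better than my old)\b", re.I),
    "price_anchor": re.compile(r"\b(worth the price|half the price|cheaper than|for the price)\b", re.I),
    "unmarketed_use": re.compile(r"\b(i use it for|also works (great )?for|perfect for my)\b", re.I),
}


def triage_positive(text: str) -> list[str]:
    """Return the cues found in a positive review; an empty list means no flag."""
    return [name for name, pattern in CUES.items() if pattern.search(text)]
```

Most positive reviews return an empty list and get only the thank-you draft; the few that match are surfaced.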

Three takeaways before you close this tab

- Bucket the reviews; the bucket tells you who to ping.

- Drafts, never auto-post. Seller responses live in your voice.

- Two-signal minimum on fakes. Resist the temptation to auto-report.

Sources

- Amazon Developer, "Selling Partner API: Reviews and Catalog endpoints", retrieved 2026-05-13, developer-docs.amazon.com sp-api

- Amazon Seller Central, "Community guidelines for customer reviews", retrieved 2026-05-13, sellercentral.amazon.com help

- FTC, "Endorsement and review guidelines", retrieved 2026-05-13, ftc.gov endorsement guides

- Amazon, "Brand Protection Report 2024 (transparency report)", retrieved 2026-05-13, aboutamazon.com policy news

- Marketplace Pulse, "Amazon reviews and seller ratings research", retrieved 2026-05-13, marketplacepulse.com