The word "agentic" carries more weight than it deserves. Strip the jargon and what is left is a five-piece checklist: goals, perception, planning, action, learning. A system that has all five connected is agentic. A system missing any of the five is not. This post defines each piece in plain English, walks through a worked example, and shows what the loop looks like running for real.

The definitional anchor used here matches Anthropic's published guidance: agentic systems are ones where the LLM dynamically directs its own processes and tool usage (Building Effective Agents, retrieved 2026-05-07). The same framing appears in academic literature dating back to the ReAct paper (Yao et al., 2022): reasoning interleaved with acting. The vocabulary is settled enough that buyers can use it without anxiety.

What the word actually means

Agentic, in the AI sense, describes a system that exhibits agency: the capacity to pursue goals over time. The word is borrowed from the social sciences, where it means much the same thing for humans. In AI, the working definition is operational: a system is agentic when it can perceive state, decide on a next step, act, and adjust based on outcomes, without a human dictating each step.

The word describes a gradient, not a binary. A spell-checker that suggests one correction is barely agentic; an LLM that runs a five-step task with tool use and error recovery is more agentic; a long-horizon system that maintains state for days and recovers from upstream failures is highly agentic. The five-axis framework in the post on autonomous vs assistive AI scores the gradient explicitly.

The five pieces, in plain English

- Goals. What success looks like. Specific enough that the system can tell whether it is finished. "Send a follow-up email to leads who have not replied in five days" is a goal. "Be helpful" is not.

- Perception. Reading current state. For a sales agent, that means querying the CRM to see who has not replied. For a code agent, reading the file. Perception is rarely glamorous and frequently broken.

- Planning. Producing a sequence of steps that, if executed, achieves the goal. Sometimes the LLM plans token-by-token (implicit). Sometimes a planner module emits explicit steps. The difference matters for debugging.

- Action. Calling tools to execute steps. Action is what separates a chatbot from an agent. The agent does not just talk about sending the email; it sends the email. See tool use.

- Learning. Updating behaviour based on outcomes. Within a task: replanning when a step fails. Across tasks: improving the prompt or the tool catalogue based on what worked. Learning is where most production systems are weakest.

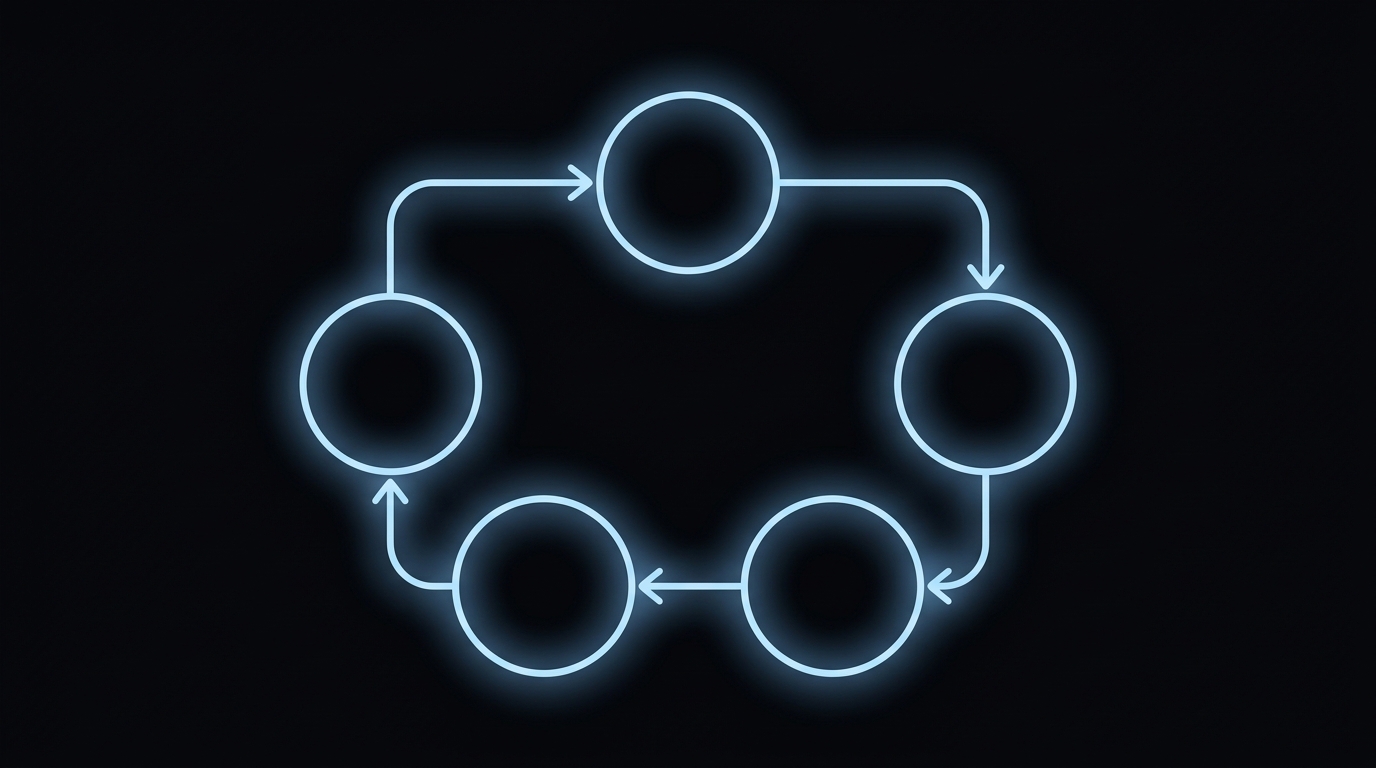

The five are not a sequence; they are a loop. The goal stays constant; perception, planning, action, and learning cycle on each step. The loop runs until the system judges the goal met (and a verification step confirms it) or until it hits a step limit and escalates.

A worked example: a follow-up email agent

Goal: send a follow-up email to leads who have not replied in five days. Perception: query the CRM, return a list of unresponsive leads. Planning: for each lead, draft an email referencing the original conversation. Action: call the CRM and email-send tools. Learning: if a lead replies "unsubscribe", update the do-not-email list and skip them next run. All five present, all five connected.
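The five pieces of this example can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real integration: `InMemoryCRM`, `Lead`, and every function name below are hypothetical stand-ins for whatever CRM and email tools a real agent would call, and the "draft" is a one-line template where a real agent would have an LLM read the original conversation.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Lead:
    email: str
    last_contacted: date
    topic: str = ""        # what the original conversation was about
    replied: bool = False


@dataclass
class InMemoryCRM:
    """Hypothetical stand-in for a real CRM plus an email-send tool."""
    leads: list
    do_not_email: set = field(default_factory=set)
    outbox: list = field(default_factory=list)


def perceive(crm, today, quiet_days=5):
    # Perception: who has gone quiet for at least `quiet_days` and may be emailed?
    cutoff = today - timedelta(days=quiet_days)
    return [
        lead for lead in crm.leads
        if not lead.replied
        and lead.last_contacted <= cutoff
        and lead.email not in crm.do_not_email
    ]


def plan(leads):
    # Planning: one draft per lead that references the original conversation.
    return [(lead, f"Following up on our conversation about {lead.topic}.")
            for lead in leads]


def act(crm, drafts, today):
    # Action: "send" each draft and record the contact date in the CRM.
    for lead, body in drafts:
        crm.outbox.append((lead.email, body))
        lead.last_contacted = today


def learn(crm, replies):
    # Learning: an "unsubscribe" reply updates the do-not-email list.
    for email, text in replies.items():
        if "unsubscribe" in text.lower():
            crm.do_not_email.add(email)


def run_once(crm, today, replies=None):
    # One pass of the loop; the goal (follow up after five quiet days) is fixed.
    learn(crm, replies or {})
    drafts = plan(perceive(crm, today))
    act(crm, drafts, today)
    return [lead.email for lead, _ in drafts]
```

Running `learn` before `perceive` is a design choice in this sketch: it ensures that an unsubscribe received since the last run removes the lead before any new draft is produced.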

What can go wrong: perception returns a stale list (the CRM is out of date); planning produces a generic email (the agent did not actually read the original conversation); action sends to the wrong address (the email field had a typo); learning never updates the do-not-email list (the unsubscribe handler was not wired). Each gap creates a different failure mode. The 80-test methodology is built to surface exactly these gaps.
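Each of the four gaps above can be turned into a cheap pre-send check. The sketch below is illustrative only and is not the 80-test methodology: the function name `preflight`, the problem labels, the one-hour freshness threshold, and the draft shape (`{"to": ..., "body": ...}`) are all assumptions. The address check is a rough syntax test; it catches a malformed address but cannot catch a well-formed typo.

```python
import re
from datetime import datetime, timedelta

# Rough syntax check only: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def preflight(draft, *, snapshot_time, now, original_topic, do_not_email,
              max_age=timedelta(hours=1)):
    """Return a list of problem labels for one draft; empty means safe to send."""
    problems = []
    # Perception gap: the CRM snapshot the agent read is too old.
    if now - snapshot_time > max_age:
        problems.append("stale_perception")
    # Planning gap: the draft never mentions the original conversation.
    if not original_topic or original_topic.lower() not in draft["body"].lower():
        problems.append("generic_plan")
    # Action gap: the recipient address is malformed.
    if not EMAIL_RE.match(draft["to"]):
        problems.append("bad_address")
    # Learning gap: the recipient unsubscribed but was drafted anyway.
    if draft["to"] in do_not_email:
        problems.append("learning_not_applied")
    return problems
```

A draft that returns a non-empty list would be held back and logged rather than sent, which makes each failure mode visible as a distinct label instead of a silent bad email.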

The example also shows where "agentic" is more than marketing. A non-agentic version of this task is a templated email blast triggered by a workflow. The agentic version reads the conversation, drafts a contextual reply, sends it, and updates state. The two products feel different in production even when both technically "send follow-up emails."

Agentic vs autonomous vs assistive

Agentic describes the behaviour pattern: goal-directed, tool-using, adaptive. Autonomous describes the supervision level: per-step approval (assistive) versus no approval (autonomous). A system can be agentic but supervised. A system can be narrowly autonomous on a single task without being broadly agentic. The terms overlap but are not synonyms. The cluster post on autonomous vs assistive AI covers the supervision spectrum in detail.

The pragmatic implication for buyers: ask vendors to describe the behaviour pattern (agentic or not) and the supervision level (autonomous or assistive) separately. A system that is assistive but agentic (a coding copilot that plans across multiple files) is a different product from a system that is autonomous but barely agentic (a workflow trigger with a hard-coded rule set). Procurement decisions hinge on the distinction.
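One way to see that the two properties are separate is to treat supervision as a switch wrapped around the same plan. In the sketch below, the plan and the tool are identical in both modes; only the approval gate changes. The names `Supervision`, `run_plan`, and `approve` are hypothetical and chosen for illustration.

```python
from enum import Enum


class Supervision(Enum):
    ASSISTIVE = "assistive"    # a human approves each step
    AUTONOMOUS = "autonomous"  # no per-step approval


def run_plan(steps, tool, supervision, approve=lambda step: False):
    """Execute planned steps; in assistive mode each step needs approval first."""
    executed = []
    for step in steps:
        if supervision is Supervision.ASSISTIVE and not approve(step):
            continue  # the human declined this step, so it is skipped
        executed.append(tool(step))
    return executed
```

For example, a multi-file coding plan run in `ASSISTIVE` mode with a reviewer who rejects deletions would execute the edits and skip the delete, while the same plan in `AUTONOMOUS` mode would execute every step. The planning behaviour is the same in both cases; only the supervision level differs.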

What agentic AI looks like in production

The production loop is unglamorous. The agent reads the goal once at start. It runs perception each step. It plans the next step using current state plus the goal. It acts via a tool call. It logs the outcome and updates whatever state matters. It checks whether the goal is met. If not, it loops. If it cannot recover from an error, it escalates. The loop is the same shape on a follow-up email task and on a multi-step research task; the tools and the goal change.
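The loop described above fits in a short function. This is a sketch under assumptions, not a production implementation: `run_agent`, `LoopResult`, and the four callables (`perceive`, `plan`, `act`, `goal_met`) are hypothetical names, and for brevity the sketch escalates on the first tool error instead of attempting recovery.

```python
from dataclasses import dataclass, field


@dataclass
class LoopResult:
    status: str                      # "done" or "escalated"
    steps: int                       # number of actions attempted
    log: list = field(default_factory=list)


def run_agent(goal, perceive, plan, act, goal_met, max_steps=10):
    """Run perceive -> check -> plan -> act until the goal is met or we escalate."""
    log = []
    steps = 0
    while True:
        state = perceive()                    # perception runs every step
        if goal_met(goal, state):             # verification against the fixed goal
            return LoopResult("done", steps, log)
        if steps >= max_steps:                # step limit reached: hand off to a human
            return LoopResult("escalated", steps, log)
        action = plan(goal, state)            # next step from goal plus current state
        steps += 1
        try:
            outcome = act(action)             # tool call
        except Exception as exc:              # simplification: no recovery attempt
            log.append((steps, action, f"error: {exc}"))
            return LoopResult("escalated", steps, log)
        log.append((steps, action, outcome))  # log the outcome of every step
```

Swapping in different `perceive`, `plan`, and `act` callables changes the task without changing the loop, which is the sense in which the shape is the same for an email task and a research task.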

The reliability properties live in the gaps between the five pieces. A weak link in perception breaks the whole loop. A weak link in learning prevents the system from improving. Production agents fail in ways that demos do not because production environments stress every link, not just the most-tested one. The product framing in "describe outcome, not workflow" exists because outcome-described tasks force the loop to actually close, which is what surfaces weak links.

Frequently asked questions

What does agentic mean in AI?

Agentic describes AI systems that pursue goals over time by perceiving state, planning steps, taking actions, and adjusting based on results. Anthropic defines agentic systems as those where the LLM dynamically directs its own processes and tool usage. The word describes a gradient, not a binary; systems are more or less agentic on five autonomy axes.

What are the five components of agentic AI?

Goals, perception, planning, action, and learning. Goals define what success looks like. Perception reads current state. Planning produces a sequence of steps. Action calls tools to execute steps. Learning updates the system based on outcomes. All five must be present and connected for behaviour to qualify as agentic in any meaningful sense.

How is agentic AI different from a chatbot?

A chatbot responds to one input at a time without persistent goals or autonomous tool use. An agentic system holds a goal across multiple steps, calls tools to act on the world, and updates its plan based on what happens. The chatbot is a single function call; the agentic system is a loop with state.

Is agentic AI the same as autonomous AI?

Closely related but not identical. Agentic describes the behaviour pattern (goal-directed, tool-using, adaptive). Autonomous describes the level of human supervision (per-step approval vs no approval). A system can be agentic but supervised; a system can be autonomous on a narrow task without being broadly agentic. Most products in 2026 sit somewhere in the middle.

What does agentic AI look like in production?

A loop: read goal, read current state, choose next step, call a tool, parse the result, decide whether to continue, repeat until done or escalate. The loop runs reliably when the five components (goals, perception, planning, action, learning) are well-specified and the reliability discipline is in place. The 80-test methodology is the operational expression of that discipline.

Three takeaways before you close this tab

- Five pieces, all connected. Goals, perception, planning, action, learning.

- Missing any one breaks the loop. Most production failures live in perception or learning.

- Agentic is a gradient. Score systems on the five-axis autonomy framework, not on the word.

Sources

- Anthropic, "Building Effective Agents", retrieved 2026-05-07, anthropic.com/engineering/building-effective-agents

- Yao et al., "ReAct: Synergizing Reasoning and Acting in Language Models", arXiv:2210.03629, 2022, retrieved 2026-05-07, arxiv.org/abs/2210.03629

- Mialon et al., "GAIA: A Benchmark for General AI Assistants", arXiv:2311.12983, 2023, retrieved 2026-05-07, arxiv.org/abs/2311.12983

- Russell & Norvig, "Artificial Intelligence: A Modern Approach", 4th edition, 2020, agent architecture chapter

- NIST, "AI Risk Management Framework", retrieved 2026-05-07, nist.gov/itl/ai-risk-management-framework